The specifics:

ToolOrchestra trains an "orchestrator" model that decides, task by task, when to call specialist tools and models and when to reason on its own.

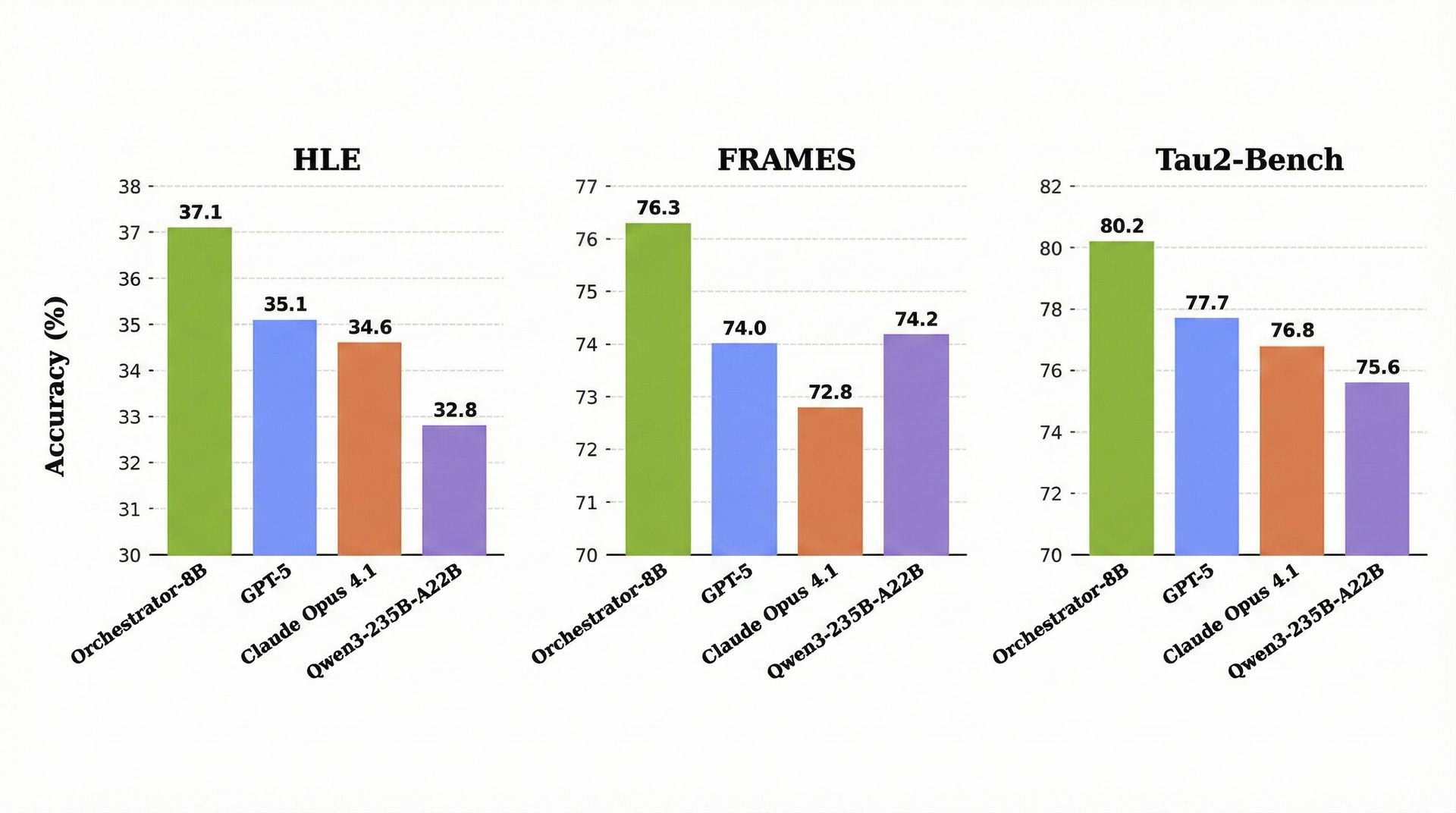

On Humanity's Last Exam, an 8B-parameter model trained with this approach scored 37.1%, outperforming GPT-5 and Claude Opus 4.1 while being 2.5 times faster and more efficient.

The orchestrator also adapted to changing tool sets and pricing, and held up even when tested with tools it had not seen during training.

By using each model and tool only where it is needed, ToolOrchestra avoids a habit of earlier agents: overusing the most powerful (and costly) tools and models.
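To make the cost trade-off concrete, here is a toy, hand-written routing rule. It is not NVIDIA's method: ToolOrchestra trains its orchestrator to make these choices, whereas this sketch simply scores each option as estimated success minus a weighted cost. The option names, success estimates, prices, and the `cost_weight` parameter are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    """A hypothetical choice available to the orchestrator."""
    name: str
    est_success: float  # assumed probability this option solves the task
    cost: float         # assumed price per call, in arbitrary units


def choose(options: list[Option], cost_weight: float) -> Option:
    """Pick the option with the best success-minus-weighted-cost score."""
    return max(options, key=lambda o: o.est_success - cost_weight * o.cost)


# Illustrative numbers only; not taken from ToolOrchestra.
OPTIONS = [
    Option("reason_internally", est_success=0.55, cost=0.0),
    Option("web_search", est_success=0.70, cost=0.1),
    Option("frontier_model", est_success=0.80, cost=1.0),
]

if __name__ == "__main__":
    # Show how the choice shifts as cost matters more.
    for weight in (0.0, 0.2, 2.0):
        print(weight, choose(OPTIONS, weight).name)
```

With these made-up numbers, a cost weight of 0.0 picks the frontier model, 0.2 picks web search, and 2.0 falls back to internal reasoning. Changing a price in `OPTIONS` shifts the choice the same way, a rough stand-in for the sensitivity to pricing that the trained orchestrator is described as having.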

ToolOrchestra challenges the notion that "bigger is better," in line with Ilya Sutskever's earlier remarks. NVIDIA shows how small models coordinating tools could be the way forward, rather than a single massive system. If orchestration outperforms scaling, the next major advance in AI may come from the smartest conductor of models and tools rather than the largest model.

EngineAi is your one-stop shop for automation insights and news on artificial intelligence.

Did you like this article? Check out more of our knowledgeable resources:

📰 In-depth analysis and up-to-date AI news.

🤝 Visit to learn about our goal and knowledgeable staff.

📬 Use this link to share your project or schedule a free consultation.

Watch this space for weekly updates on digital transformation, process automation, and machine learning. Let us assist you in bringing the future into your company right now.