OpenAI Frontier: The Enterprise AI Platform Turning Artificial Intelligence into a Managed Digital Workforce

OpenAI's new Frontier platform doesn't just deploy AI agents—it onboards them, evaluates their performance, and governs their access like human employees. Here's how the "Workday for AI" is reshaping enterprise automation.

The enterprise AI landscape shifted dramatically on February 5, 2026, when OpenAI unveiled Frontier—a platform that transforms how organizations deploy, manage, and scale artificial intelligence agents. Unlike previous AI tools that functioned as isolated assistants, Frontier treats AI agents as integrated digital coworkers, complete with employee-like identities, structured onboarding processes, performance evaluation frameworks, and granular permission controls.

This isn't merely a product launch; it's OpenAI's strategic gambit to capture the "orchestration layer" of enterprise AI—a market Gartner recently called "the most valuable real estate in AI." With early adoption from Fortune 500 giants including HP, Oracle, State Farm, and Uber, Frontier signals a fundamental shift from experimental AI pilots to production-grade AI workforce management.

The Enterprise AI Problem: Fragmentation and Isolation

Frontier addresses a critical pain point that has plagued enterprise AI adoption: system fragmentation. Organizations have spent years deploying AI agents across disparate systems—chatbots in customer service, coding assistants in engineering, analytics tools in finance—but these agents operate in silos, lacking shared context and creating operational complexity rather than reducing it.

"What's really missing still, for most companies, is just a simple way to unleash the power of agents as teammates that can operate inside the business without the need to rework everything underneath," explained Denise Dresser, OpenAI's chief revenue officer, during the platform's launch briefing.

The consequences of this fragmentation are severe. Each new agent requires custom integrations, maintains separate context about the business, and operates without awareness of other AI systems. Security teams struggle to enforce consistent governance. Operations teams can't measure aggregate performance. And business users face a patchwork of AI interfaces rather than unified intelligent assistance.

Frontier solves this by creating what OpenAI calls a "semantic layer for the enterprise"—a unified intelligence infrastructure that connects CRM systems, ticketing platforms, data warehouses, and internal applications into a shared business context accessible to all managed agents.

The Digital Employee Lifecycle: Onboarding, Permissions, and Performance

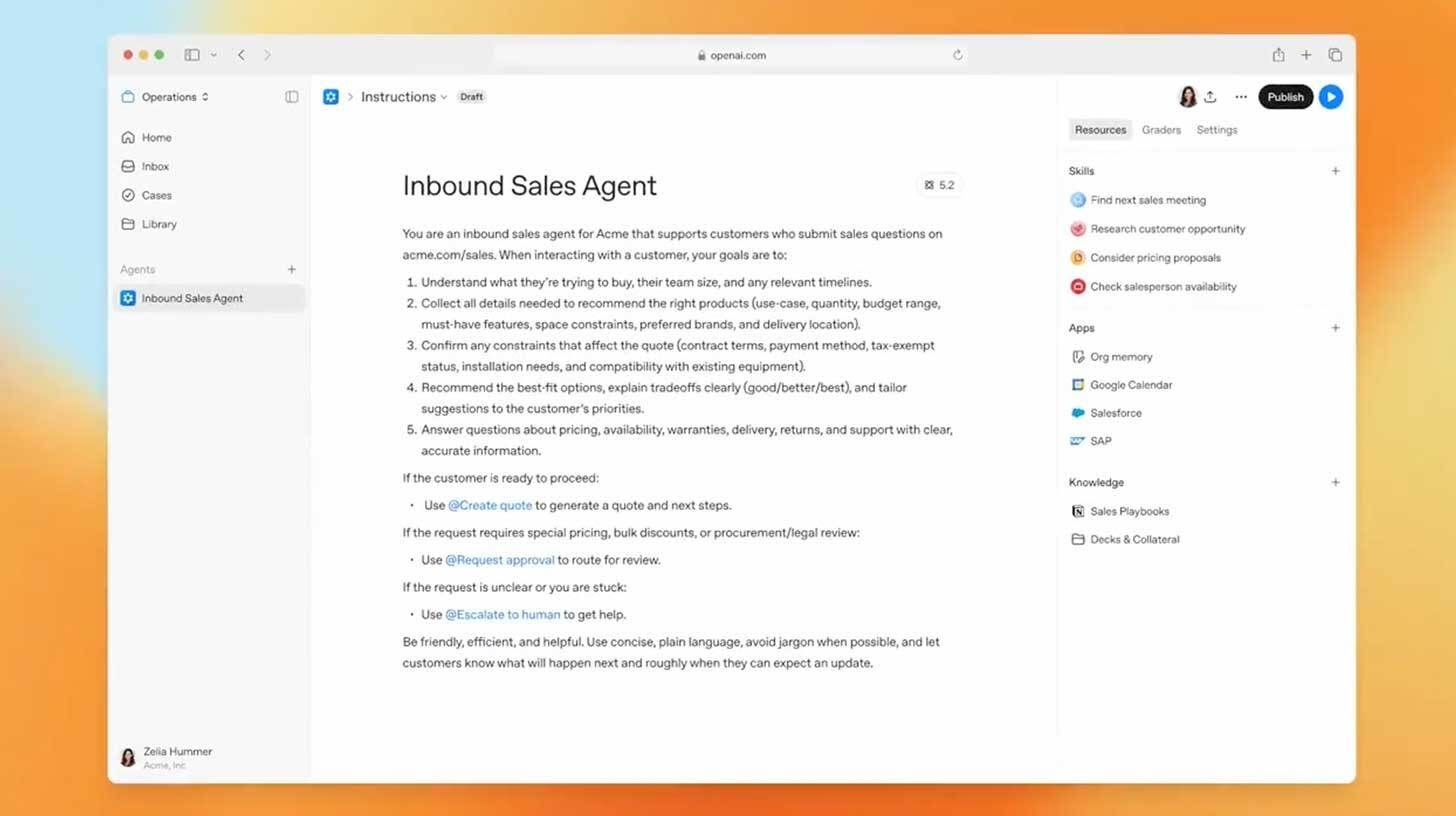

Frontier's architecture mirrors human resource management systems, treating AI agents as employees rather than software tools. This approach makes AI governance intuitive for enterprise operations teams while addressing the compliance and security requirements of regulated industries.

Structured Onboarding and Institutional Knowledge

When a new AI agent joins the Frontier platform, it undergoes formal onboarding similar to human employee orientation. The agent receives structured training on company policies, data schemas, workflow rules, and domain-specific institutional knowledge. This process ensures agents understand organizational context before accessing production systems.

The onboarding includes exposure to "institutional memory"—historical decision patterns, approved communication templates, and business logic accumulated from previous agent interactions. This memory layer allows agents to learn not just from their own experiences but from the collective operational history of the organization.

Identity and Access Management

Every Frontier agent operates under its own distinct identity with explicitly scoped permissions. Enterprise Identity and Access Management (IAM) systems cover both human employees and AI agents, enabling unified governance across the workforce. Security teams can define exactly which systems each agent can access, what actions it can perform, and what data it can view or modify.

This granular control addresses the "black box" concern that has slowed AI adoption in regulated industries. Financial services firms can ensure trading agents only access market data, not customer PII. Healthcare organizations can restrict clinical agents to specific patient populations. And all actions are logged with full audit trails for compliance reporting.
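The scoped-permission model described above can be illustrated with a minimal sketch. This is not Frontier's API, which the article does not detail; `AgentIdentity`, `AccessController`, the scope names, and the log fields are all hypothetical, chosen only to show how a per-agent allow-list and an audit trail fit together.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical agent identity with explicitly scoped permissions."""
    agent_id: str
    # Each scope is a (system, action) pair the agent is allowed to use.
    scopes: frozenset


@dataclass
class AccessController:
    """Checks each request against the agent's scopes and logs the decision."""
    audit_log: list = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, system: str, action: str) -> bool:
        allowed = (system, action) in agent.scopes
        # Denied requests are logged too, so reviewers can see attempted access.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent.agent_id,
            "system": system,
            "action": action,
            "allowed": allowed,
        })
        return allowed


# Assumed example: a trading agent limited to reading market data.
trading_agent = AgentIdentity(
    agent_id="trading-agent-01",
    scopes=frozenset({("market_data", "read")}),
)
controller = AccessController()

can_read_market = controller.authorize(trading_agent, "market_data", "read")
can_read_pii = controller.authorize(trading_agent, "customer_pii", "read")
print(can_read_market, can_read_pii)  # True False
print(len(controller.audit_log))      # 2
```

Denying by default, so that anything not listed in `scopes` is refused, keeps the policy easy to audit: the allow-list is the complete description of what the agent can do.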

Continuous Evaluation and Feedback Loops

Perhaps Frontier's most innovative feature is its built-in evaluation infrastructure. The platform continuously monitors agent performance on real operational tasks, measuring success rates, accuracy, latency, and business impact. This data feeds into structured feedback loops where agents improve through experience, guided by human reviewers who provide corrective input.

"What we're fundamentally doing is basically transitioning agents into true AI co-workers," explained Barret Zoph, OpenAI's general manager of business-to-business. "They learn via experience, with built-in evaluation," he said, comparing the process to onboarding a new employee with reviews and boundaries.

This creates a virtuous cycle: agents perform work, their performance is measured against outcomes, feedback identifies improvement areas, and the agents refine their approach. Over time, this produces increasingly capable AI workers that understand organizational nuances and optimize for specific business objectives.
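As a rough illustration of the measurement step in that cycle, the sketch below aggregates per-task records into a success rate and mean latency, then flags an agent for human review when it falls below a threshold. The record fields, the `summarize` and `needs_review` helpers, and the 90% threshold are assumptions made for the example, not details OpenAI has published.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskResult:
    """One completed task, as a hypothetical evaluation record."""
    succeeded: bool
    latency_s: float


def summarize(results: list[TaskResult]) -> dict:
    """Aggregate raw task records into the metrics a reviewer would look at."""
    if not results:
        raise ValueError("need at least one task result")
    return {
        "tasks": len(results),
        "success_rate": sum(r.succeeded for r in results) / len(results),
        "mean_latency_s": mean(r.latency_s for r in results),
    }


def needs_review(summary: dict, min_success_rate: float = 0.9) -> bool:
    """Flag the agent for human feedback when it misses the assumed target."""
    return summary["success_rate"] < min_success_rate


results = [
    TaskResult(succeeded=True, latency_s=1.0),
    TaskResult(succeeded=True, latency_s=2.0),
    TaskResult(succeeded=False, latency_s=3.0),
    TaskResult(succeeded=True, latency_s=2.0),
]
summary = summarize(results)
print(summary["success_rate"])    # 0.75
print(summary["mean_latency_s"])  # 2.0
print(needs_review(summary))      # True
```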

Technical Architecture: The Semantic Layer and Multi-Vendor Support

Frontier's technical design reflects a sophisticated understanding of enterprise IT realities. Rather than forcing organizations to abandon existing AI investments or migrate data to OpenAI's infrastructure, Frontier operates as an open, extensible layer that integrates with diverse technology ecosystems.

Shared Business Context

At Frontier's core is a semantic layer that ingests and normalizes data from across the enterprise. When agents need information, they query this unified context rather than maintaining separate connections to each source system. A customer service agent can simultaneously access CRM records, billing history, support tickets, and product documentation through a single, coherent interface.

This approach eliminates the integration redundancy that has characterized enterprise AI deployments. Instead of connecting each agent individually to Salesforce, Snowflake, Jira, and ServiceNow, organizations connect these systems once to Frontier, and all agents benefit from the shared infrastructure.
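The connect-once idea can be sketched as follows. The `SharedContext` class and the stubbed source functions are hypothetical stand-ins, not Frontier's interfaces; the point is only that each system is registered a single time and every agent reads through the same lookup. The two helper functions show the resulting arithmetic: point-to-point wiring grows with agents times systems, while a shared layer grows with agents plus systems.

```python
from typing import Callable


class SharedContext:
    """Hypothetical shared layer: each source system is connected once."""

    def __init__(self) -> None:
        self._sources: dict[str, Callable[[str], dict]] = {}

    def connect(self, name: str, fetch: Callable[[str], dict]) -> None:
        self._sources[name] = fetch

    def lookup(self, customer_id: str) -> dict:
        # Any agent gets one combined view instead of calling each system itself.
        return {name: fetch(customer_id) for name, fetch in self._sources.items()}


context = SharedContext()
# Stub fetchers standing in for real CRM, billing, and ticketing integrations.
context.connect("crm", lambda cid: {"id": cid, "name": "Example Customer"})
context.connect("billing", lambda cid: {"balance_due": 0})
context.connect("tickets", lambda cid: {"open": 2})

view = context.lookup("c-42")
print(sorted(view))  # ['billing', 'crm', 'tickets']


def point_to_point(agents: int, systems: int) -> int:
    """Integrations needed when every agent connects to every system."""
    return agents * systems


def via_shared_layer(agents: int, systems: int) -> int:
    """Integrations needed when agents and systems each connect to the layer once."""
    return agents + systems


print(point_to_point(10, 4), via_shared_layer(10, 4))  # 40 14
```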

Multi-Vendor Agent Support

Frontier's most strategically significant architectural decision is its openness to non-OpenAI agents. The platform manages AI workers built on Anthropic's Claude, Google's Gemini, Meta's Llama, or custom fine-tuned models with equal facility. This multi-vendor support eliminates lock-in concerns and allows enterprises to select the best model for each specific use case while maintaining unified governance.

"We're not going to build everything ourselves," stated Fidji Simo, OpenAI's CEO of Applications. "We are going to be working with the ecosystem to build alongside them, and we embrace the fact that enterprises are going to need a lot of different partners."

This openness represents a calculated strategic bet. By welcoming competitors' agents onto its platform, OpenAI concedes the agent layer to specialized providers while positioning Frontier as the essential coordination infrastructure—the "AWS of enterprise AI" that captures value regardless of which models power individual agents.
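One common way to manage agents from different vendors under a single governance layer is an adapter interface, sketched below. Nothing here reflects how Frontier is actually built: the `Agent` protocol, `StubAgent`, and `AgentRegistry` are illustrative names, and the stub returns a canned string rather than calling any real model.

```python
from typing import Protocol


class Agent(Protocol):
    """Minimal interface a managed agent is assumed to expose."""
    vendor: str

    def run(self, task: str) -> str: ...


class StubAgent:
    """Stand-in for a vendor-specific agent; makes no real model call."""

    def __init__(self, vendor: str) -> None:
        self.vendor = vendor

    def run(self, task: str) -> str:
        return f"[{self.vendor}] handled: {task}"


class AgentRegistry:
    """Routes tasks to named agents and records who handled what."""

    def __init__(self) -> None:
        self._agents: dict[str, Agent] = {}
        self.history: list[tuple[str, str, str]] = []

    def register(self, name: str, agent: Agent) -> None:
        self._agents[name] = agent

    def dispatch(self, name: str, task: str) -> str:
        agent = self._agents[name]
        result = agent.run(task)
        # The same record is kept regardless of which vendor ran the task.
        self.history.append((name, agent.vendor, task))
        return result


registry = AgentRegistry()
registry.register("support", StubAgent("vendor-a"))
registry.register("analytics", StubAgent("vendor-b"))

reply = registry.dispatch("support", "reset password")
registry.dispatch("analytics", "weekly report")
print(reply)                  # [vendor-a] handled: reset password
print(len(registry.history))  # 2
```

Because the registry depends only on the `run` method, swapping the model behind an agent does not change how tasks are routed or logged.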

Flexible Execution Environments

Frontier agents can execute across diverse infrastructure: local enterprise environments, private cloud deployments, or OpenAI-hosted runtimes. This flexibility accommodates organizations with strict data residency requirements, air-gapped security needs, or existing cloud commitments. The platform handles task queuing, retry logic, and state persistence, ensuring long-running agent workflows complete reliably even across system interruptions.
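The retry and state-persistence behavior mentioned above can be sketched generically. The code below is an assumption-laden illustration, not Frontier's runtime: `run_workflow`, the JSON checkpoint file, and the backoff parameters are invented for the example. It retries a failing step with exponential backoff and records each completed step, so a rerun skips work that already finished.

```python
import json
import tempfile
import time
from pathlib import Path


def run_workflow(steps, checkpoint: Path, max_attempts: int = 3,
                 base_delay_s: float = 0.01) -> list:
    """Run named steps in order, retrying failures and persisting progress."""
    # Resume from the checkpoint if a previous run was interrupted.
    state = json.loads(checkpoint.read_text()) if checkpoint.exists() else {"done": []}
    for name, step in steps:
        if name in state["done"]:
            continue  # already completed in an earlier run
        for attempt in range(1, max_attempts + 1):
            try:
                step()
                break
            except RuntimeError:
                if attempt == max_attempts:
                    raise
                # Exponential backoff: base delay, then double each retry.
                time.sleep(base_delay_s * 2 ** (attempt - 1))
        state["done"].append(name)
        checkpoint.write_text(json.dumps(state))
    return state["done"]


calls = {"update": 0}


def fetch() -> None:
    pass  # placeholder for a step that succeeds immediately


def update() -> None:
    calls["update"] += 1
    if calls["update"] < 3:
        raise RuntimeError("transient failure")  # fails on the first two attempts


with tempfile.TemporaryDirectory() as tmp:
    checkpoint = Path(tmp) / "workflow.json"
    steps = [("fetch", fetch), ("update", update)]
    first_run = run_workflow(steps, checkpoint)
    second_run = run_workflow(steps, checkpoint)  # nothing left to do

print(first_run)        # ['fetch', 'update']
print(calls["update"])  # 3
```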

Early Adoption: Fortune 500 Validation

Frontier launched with an impressive roster of enterprise early adopters, demonstrating immediate traction in the market's most demanding segments:

HP: Integrating Frontier across IT service management and supply chain operations

Oracle: Deploying agents for database administration and enterprise application support

State Farm: Implementing AI agents for claims processing and customer service workflows

Uber: Utilizing Frontier for marketplace optimization and driver support automation

Intuit: Powering tax preparation assistance and financial advisory services

Thermo Fisher Scientific: Automating laboratory data analysis and research workflows

Beyond these launch partners, OpenAI has embedded Forward Deployed Engineers within customer organizations—a practice borrowed from successful enterprise software companies like Palantir. These engineers work alongside client teams to develop best practices, troubleshoot integration challenges, and feed operational insights back to OpenAI's product development teams.

This high-touch approach reflects the reality that enterprise AI deployment remains as much organizational change management as technical implementation. Companies don't just need working software; they need expertise in restructuring workflows, retraining staff, and establishing new governance protocols.

The Competitive Landscape: Orchestration Wars

Frontier's launch intensifies an emerging battle for control of the enterprise AI orchestration layer. While OpenAI and Anthropic have competed primarily on model capabilities and coding tools, Frontier signals that the fight is expanding to who controls the infrastructure underlying AI coworkers.

Anthropic's Counter-Position: Vertical Integration

Anthropic has pursued a different architectural philosophy with its Cowork platform, emphasizing deep vertical integration rather than horizontal openness. Rather than building a management layer above all agents, Anthropic extends orchestration capabilities outward from Claude itself, creating tightly integrated experiences for specific business functions.

This approach bets that agent quality remains meaningfully differentiated when embedded within orchestration—that enterprises will prefer superior Claude-powered workflows over generic management of interchangeable agents. It's closer to Apple's ecosystem strategy: control the end-to-end experience, build switching costs through workflow integration, and compete on execution quality.

The Incumbent Response: Salesforce, Microsoft, and ServiceNow

Traditional SaaS vendors aren't ceding this territory without resistance. Salesforce's Agentforce, launched in late 2024, already manages AI agents within its CRM ecosystem. Microsoft integrates agent capabilities across Microsoft 365 and Azure. ServiceNow embeds AI workflow automation in IT service management.

These incumbents possess advantages Frontier lacks: existing customer relationships, deep workflow integration, and decades of enterprise trust. However, they face a structural challenge—their agent platforms work best within their own ecosystems, while Frontier promises unified management across all enterprise systems.

The consulting partnerships OpenAI announced—with McKinsey, BCG, Accenture, and Capgemini—directly threaten SaaS vendors by co-opting the systems integrators that traditionally implement enterprise software. As these firms build Frontier practices and certify thousands of consultants on OpenAI technology, they create a distribution channel that bypasses incumbent sales motions.

Implications for Enterprise Operations

Frontier's emergence carries profound implications for how organizations structure work, manage technology, and allocate human capital.

The Shift from Tool to Teammate

Traditional enterprise software augmented individual productivity—Excel for analysts, Salesforce for sales reps, Jira for developers. Frontier enables a shift from individual augmentation to team transformation, where AI agents become colleagues that handle specific responsibilities within collaborative workflows.

This could change hiring patterns. Instead of adding headcount for data entry, ticket triage, or report generation, organizations can deploy Frontier agents. Human workers then shift from execution to orchestration: defining objectives, reviewing agent outputs, handling exceptions, and refining agent training.

Governance at Scale

As AI agents proliferate, potentially numbering in the thousands for large enterprises, manual oversight becomes impractical. Frontier's automated governance, with its identity management, permission scoping, and audit logging, provides the infrastructure for AI workforce management at scale.

This addresses the "shadow AI" problem, where employees deploy unauthorized AI tools without IT oversight. By offering a sanctioned, governable alternative that doesn't sacrifice capability, Frontier channels AI adoption into controlled pathways.

The Feedback-Driven Organization

Frontier's evaluation infrastructure creates systematic mechanisms for operational improvement. Every agent action generates performance data; every outcome feeds into optimization loops. Organizations become learning systems where AI agents continuously improve based on operational experience.

This contrasts with traditional software deployments, where capabilities remain static until the next version release. Frontier agents evolve in production, becoming more effective as they accumulate organizational context and receive human feedback.

Challenges and Considerations

Despite its promise, Frontier faces significant adoption challenges that organizations must navigate.

Integration Complexity

Connecting Frontier to existing enterprise systems requires substantial technical effort. Legacy systems may lack APIs, requiring custom integration development. Data quality issues in source systems propagate into Frontier's semantic layer, necessitating cleansing initiatives. And organizational politics often complicate cross-system data sharing.

Change Management

Deploying AI agents as coworkers disrupts established workflows and job roles. Resistance from employees fearing displacement, managers uncertain about oversight responsibilities, and executives questioning ROI can stall implementations. Success requires sustained change management investment alongside technical deployment.

Vendor Concentration Risk

While Frontier's multi-vendor support reduces model lock-in, adopting the platform creates a dependency on OpenAI's infrastructure. Organizations must evaluate whether the governance benefits outweigh the risks of concentrating AI orchestration with a single provider.

The Future of AI Workforce Management

Frontier represents an inflection point in enterprise AI adoption. The conversation is shifting from "What can AI models do?" to "How do we operationalize AI workers at scale?" This operational focus—onboarding, permissions, evaluation, governance—reflects the maturation of enterprise AI from experimental technology to production infrastructure.

The platform's success will depend on OpenAI's ability to execute its open-ecosystem strategy while maintaining security and performance standards. If Frontier becomes the default orchestration layer for enterprise AI—managing agents from OpenAI, Anthropic, Google, and beyond—it captures enormous value regardless of which models dominate specific use cases.

For enterprise leaders, Frontier offers a pathway from AI pilot programs to production deployment at scale. The employee-management metaphor provides familiar frameworks for governing unfamiliar technology. And the promise of AI coworkers that improve through experience—learning organizational context, refining their approach, and operating within defined boundaries—addresses the capability-governance tension that has constrained enterprise AI adoption.

The model wars between OpenAI and Anthropic continue, but Frontier demonstrates that the ultimate prize isn't just the best model—it's the platform that orchestrates how all models work together. In the emerging AI economy, the operating system for digital labor may prove more valuable than the laborers themselves.

As Sam Altman noted when announcing the platform, "We expect this will quickly become core to our product offerings." For enterprises navigating the transition to AI-powered operations, Frontier offers a structured, governable path forward—turning the promise of AI agents into the reality of AI coworkers.

Your one-stop shop for automation insights and news on artificial intelligence is EngineAi.
