The specifics:

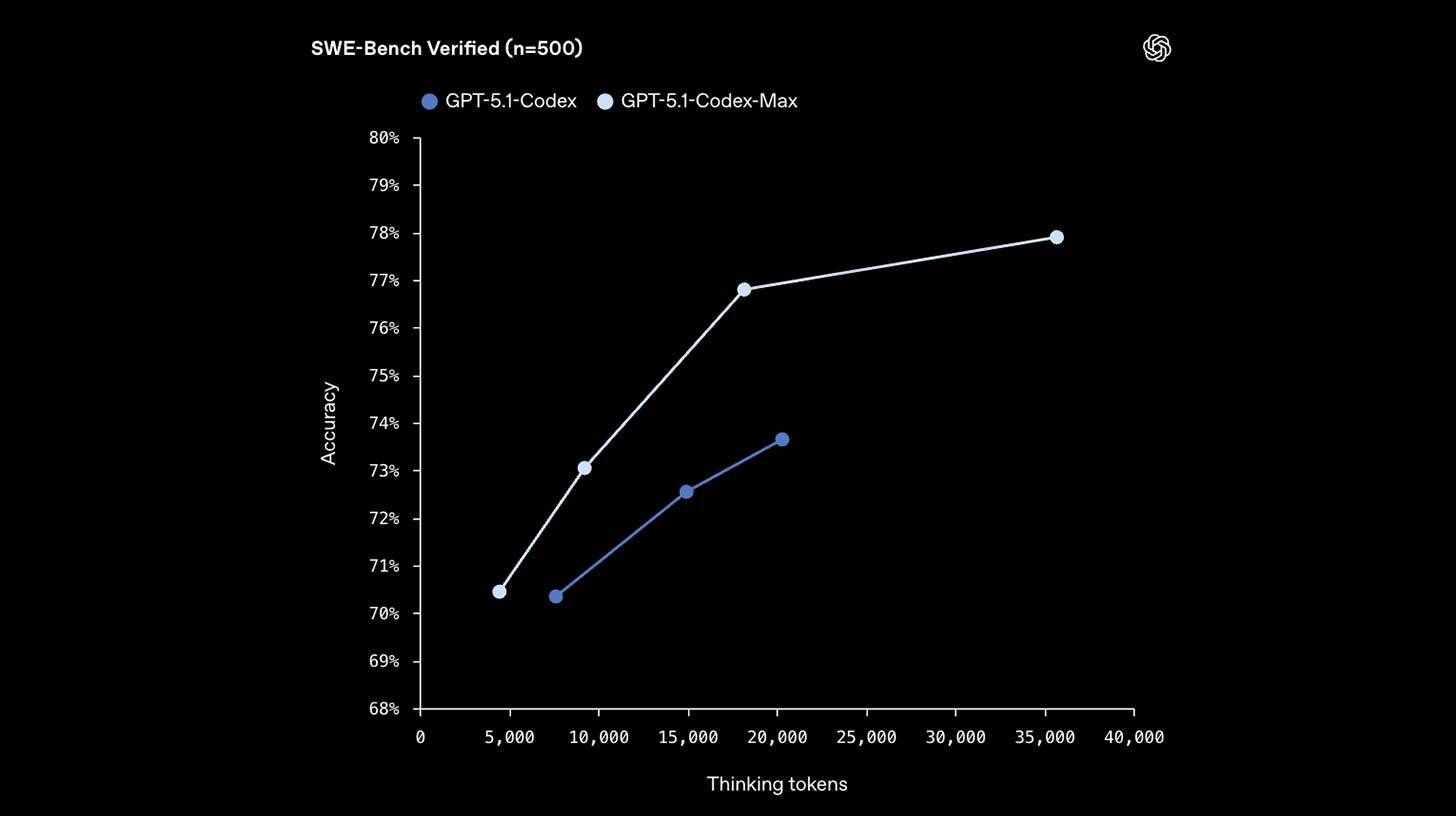

Codex-Max outperforms the new Gemini 3 Pro on coding workloads and shows significant gains over Codex-High across development benchmarks.

Thanks to more efficient reasoning, the model is noticeably faster on real-world tasks while using 30% fewer tokens than its predecessor.

Max can operate across millions of tokens and for more than 24 hours straight thanks to compaction, which lets it "prune" session history while preserving context.
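To make the idea of compaction concrete, here is a minimal sketch of pruning a session history once it grows past a token budget. It is not OpenAI's implementation, whose details are not described here. The `Message` type, the word-count tokenizer, the placeholder `summarize` function, and the `budget` and `keep_recent` parameters are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def summarize(messages: list[Message]) -> str:
    # Placeholder summarizer (assumption): keep the first five words of each
    # pruned message. A real system would presumably have a model write this.
    return " | ".join(" ".join(m.text.split()[:5]) for m in messages)


def compact(history: list[Message], budget: int, keep_recent: int = 2) -> list[Message]:
    """Replace older messages with a single summary once the budget is exceeded."""
    total = sum(count_tokens(m.text) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # nothing to prune yet
    cut = len(history) - keep_recent
    old, recent = history[:cut], history[cut:]
    # The summary stands in for everything older than the most recent messages.
    return [Message("summary", summarize(old))] + recent
```

Calling `compact(history, budget=20)` on a long session returns one summary message followed by the two most recent messages untouched. This sketch only shrinks the history; it does not guarantee the result fits under the budget, which a production system would need to handle.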

The model is available immediately to Plus, Pro, and Enterprise users through OpenAI's Codex CLI and IDE extensions; API access will follow shortly.

Gemini 3 may have stolen the show this week, but coding performance was one of the few areas where it still trailed.

Codex-Max, another incremental improvement rather than a major release, raises the bar even further.

The 24-hour coding sessions also continue the steady upward trend in how long the best AI models can work on a single task.

EngineAi is your one-stop shop for automation insights and news on artificial intelligence.

Did you like this article? Check out more of our knowledgeable resources:

📰 In-depth analysis and up-to-date AI news.

🤝 Visit to learn about our goal and knowledgeable staff.

📬 Use this link to share your project or schedule a free consultation.

Watch this space for weekly updates on digital transformation, process automation, and machine learning. Let us assist you in bringing the future into your company right now.
