Beyond the Monolith: How Scale AI's SEAL Showdown is Democratizing AI Evaluation

In the rapidly evolving landscape of artificial intelligence, benchmarking has become the currency of credibility. Leaderboards like LMArena have long served as the de facto standard for comparing large language models, offering a seemingly objective measure of performance based on aggregated human preferences. But what if the "best" model isn't the same for everyone? What if a tool that excels for a software engineer in San Francisco falls short for a teacher in Nairobi, or a student in São Paulo? Enter Scale AI with SEAL Showdown, a new benchmarking platform that challenges the hegemony of one-size-fits-all rankings by dividing LLM performance by actual user preferences across demographics. This isn't just another leaderboard; it is a fundamental rethinking of how we measure AI quality—shifting from universal scores to contextual insights, and from elite consensus to global diversity.

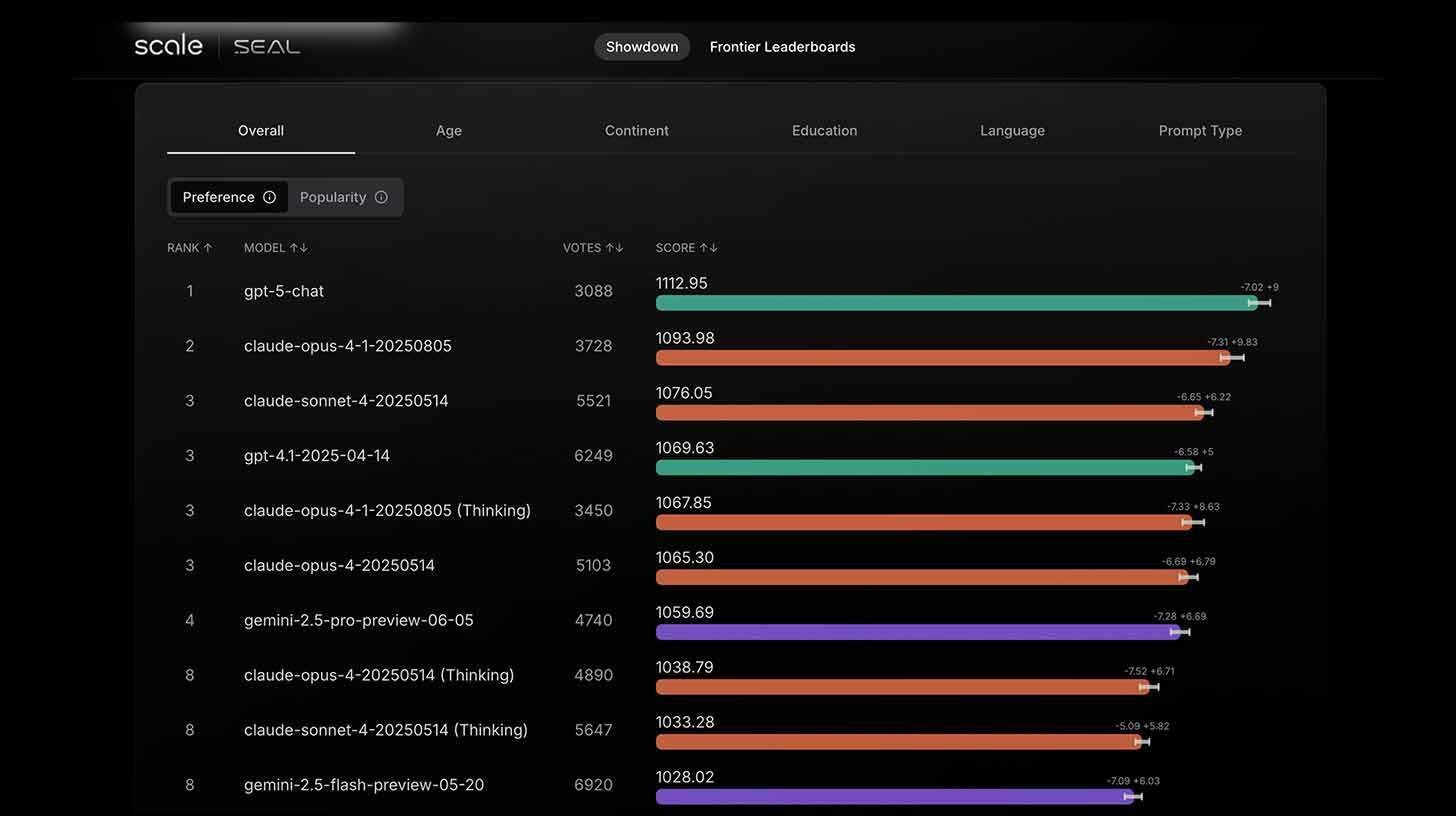

The core innovation of SEAL Showdown lies in its methodology. Rather than relying on a narrow pool of expert evaluators or automated metrics, Scale leverages its global contributor network—spanning 70 languages and 100 countries—to generate preference data from real users in real contexts. Through Scale's Playground app, contributors gain free access to frontier models and can optionally engage in side-by-side comparisons, voting on which output they prefer for a given prompt. This voluntary voting system produces genuine preference data rooted in lived experience, not hypothetical benchmarks. The result is a ranking system that reflects how models actually perform for the people who use them—not just how they perform on curated test sets.

But Scale recognizes that raw preference data is vulnerable to manipulation. To prevent gaming and ensure authentic input, the platform implements several safeguards. Voting is entirely optional, reducing the incentive for bad-faith actors to flood the system with biased ratings. More critically, Scale imposes a 60-day embargo on data sharing after collection, preventing real-time manipulation of rankings based on short-term campaigns or coordinated voting. These measures are designed to preserve the integrity of the feedback loop, ensuring that SEAL Showdown reflects sustained user sentiment rather than transient noise. In an era where benchmark manipulation has become a known risk, this commitment to methodological rigor is essential.

The true differentiator, however, is demographic segmentation. SEAL Showdown's leaderboards are not monolithic; they are divided by user characteristics like age, education level, language, and region. This granularity reveals insights that aggregate rankings obscure. A model that ranks highly overall might underperform for non-native English speakers, or excel for technical tasks but struggle with creative writing. By surfacing these variations, SEAL Showdown empowers users to choose models based on their specific needs, not just global averages. For a healthcare worker in rural India, the "best" model might be one that understands local dialects and medical terminology; for a legal professional in Berlin, it might be one that excels at precise, formal reasoning. Context matters—and SEAL Showdown makes context visible.

This approach addresses a critical blind spot in current evaluation practices. Traditional leaderboards, for all their utility, often reflect the preferences of a narrow demographic: technically proficient, English-speaking, early-adopter users. This creates a feedback loop where models are optimized for that audience, potentially widening the gap between frontier performance and real-world utility for underrepresented groups. SEAL Showdown disrupts this cycle by centering diverse voices in the evaluation process. It acknowledges that AI quality is not absolute; it is relational, shaped by the intersection of capability, culture, and use case.

The strategic implications are significant. For model developers, SEAL Showdown offers actionable intelligence: not just whether a model is "good," but for whom and under what conditions. This can guide targeted improvements, from fine-tuning for specific languages to optimizing for accessibility features. For enterprises deploying AI, it provides a more nuanced basis for vendor selection, reducing the risk of adopting a model that performs well in benchmarks but poorly in production contexts. For researchers, it opens new avenues for studying bias, generalization, and the sociotechnical dimensions of AI performance. In short, SEAL Showdown transforms evaluation from a static scorecard into a dynamic diagnostic tool.

The timing of this launch is no accident. As AI models become more powerful and more widely deployed, the stakes of evaluation rise. A model that generates harmful content for one demographic, or fails to understand critical nuances for another, can have real-world consequences. By introducing competition into the rankings market, Scale AI is not just challenging LMArena's dominance; it is raising the standard for what responsible evaluation should look like. Diversity of perspective, methodological transparency, and contextual relevance are no longer optional—they are essential.

Yet, the platform's success depends on sustained participation. A benchmark is only as good as the data that fuels it, and SEAL Showdown relies on voluntary contributions from a globally distributed network. Scale must continue to incentivize engagement, ensure equitable representation across demographics, and maintain trust through rigorous data governance. The 60-day embargo is a start, but long-term credibility will require ongoing commitment to openness, accountability, and community input.

Looking ahead, SEAL Showdown hints at a broader shift in how we assess AI systems. The future of evaluation may not be a single leaderboard, but a constellation of context-aware metrics that reflect the pluralistic nature of human needs. Models could be rated not just on accuracy or fluency, but on cultural competence, accessibility, and adaptability to local contexts. This would represent a maturation of the field—from measuring what AI can do, to understanding how well it serves whom.

For the global AI community, Scale's initiative is both a challenge and an invitation. It challenges the assumption that one ranking can capture the complexity of model performance. It invites developers, users, and researchers to embrace a more nuanced, inclusive approach to evaluation. The goal is not to discard existing benchmarks, but to complement them with tools that reveal the full spectrum of AI capability.

The age of monolithic leaderboards is ending. In its place rises a vision of contextual intelligence, where evaluation reflects the diversity of the world it serves. SEAL Showdown is more than a competitor to LMArena; it is a statement about the values that should guide AI development: inclusivity, transparency, and respect for difference.

As AI continues to permeate every facet of society, the question is no longer just "Which model is best?" but "Best for whom, and for what?" Scale AI's SEAL Showdown offers a framework for answering that question—not with a single number, but with a richer, more human understanding of performance.

The rankings market now has competition. The evaluation landscape just got more interesting. And for users worldwide, the promise is clear: AI that works for everyone, not just the few.

Your one-stop shop for automation insights and news on artificial intelligence is EngineAi.

Did you like this article? Check out more of our knowledgeable resources:

Watch this space for weekly updates on digital transformation, process automation, and machine learning. Let us assist you in bringing the future into your company right now