Two years ago, OpenAI made a bet on customization. The GPT Store launched with fanfare, promising a future where anyone could build a tailored version of ChatGPT for any task – legal document review, meal planning, code debugging, you name it. Developers flocked to the platform. Thousands of GPTs were created. And then, largely, nothing happened.

The GPT Store didn’t stick. Users dabbled, then returned to the base model. The promised “App Store for AI” failed to materialize because the underlying technology was missing a crucial ingredient: agency. A GPT could follow a custom instruction set, but it couldn’t act. It couldn’t remember past conversations across sessions. It couldn’t run on a schedule. It couldn’t live inside Slack, waiting for a teammate to tag it. It was a prompt wrapper, not a coworker.

Today, OpenAI is trying again – and this time, the architecture is fundamentally different.

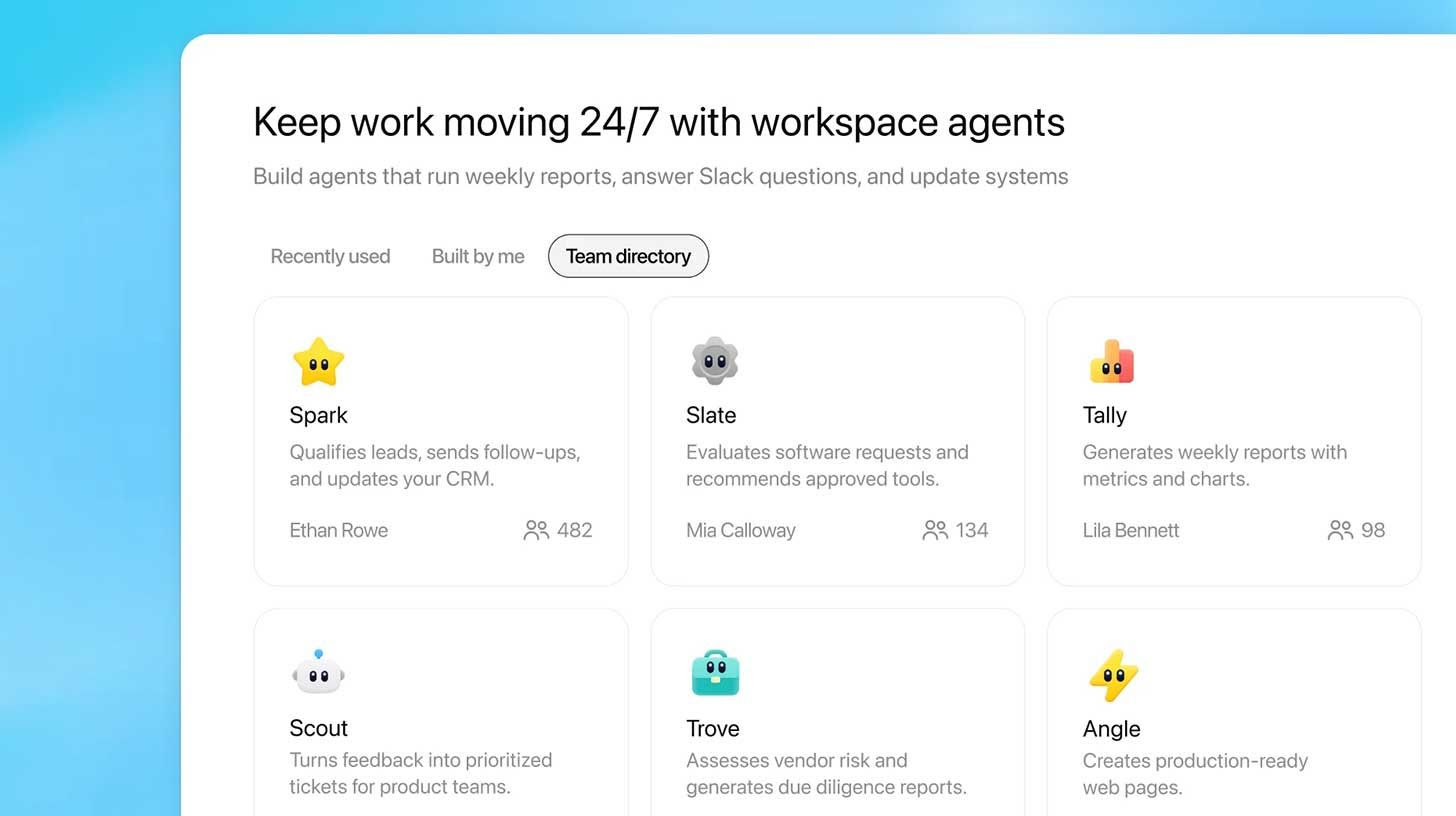

Workspace Agents are launching in research preview for ChatGPT Business, Enterprise, Edu, and teacher plans. Backed by Codex running in the cloud, these are not upgraded GPTs. They are autonomous, stateful, tool-calling agents designed to tackle multi-step team workflows across ChatGPT and Slack. They can retain memory across interactions. They can call connected apps. They can trigger on a schedule when users are offline. They can request human approvals before sensitive actions. And critically, they can be shared across entire teams, turning institutional knowledge into reusable, executable processes.

“The original GPTs were a solo experience,” an OpenAI product manager, speaking on background, told me. “You built something for yourself, maybe shared it, but the moment you walked away, it stopped. Workspace agents are built for teams. They keep running. They learn from every interaction. They become the shared memory of how your team works.”

This is not a minor update. This is a strategic pivot. After years of focusing on individual productivity, OpenAI is finally making a serious enterprise play – and they are using Codex, not ChatGPT’s conversational engine, as the backbone.

The question is whether this second act will succeed where the first failed. And the early signs, based on internal OpenAI usage and the feature set detailed in today’s announcement, suggest that Workspace Agents may solve a problem that has quietly plagued enterprises for two years: the slow accumulation of scattered prompts, half-built workflows, and duplicated efforts.

Part I: The Ghost of GPTs – What Went Wrong in 2023

To understand why Workspace Agents matter, one must first understand the failure they are designed to correct.

When OpenAI launched GPTs in November 2023, the vision was elegant. Users could create custom versions of ChatGPT by combining instructions, knowledge files, and actions (API calls to external services). A real estate agent could build a “listing summarizer.” A teacher could build a “lesson plan generator.” A coder could build a “debugging assistant.” These GPTs could be shared privately, publicly, or within a workspace.

Within three months, over 3 million GPTs had been created. But usage data told a different story. According to internal metrics leaked to the press at the time, the vast majority of GPTs were used only once – by their creator. The GPT Store, which OpenAI had hoped would become a thriving marketplace, was largely a graveyard.

What happened? The answer is threefold:

1. Statelessness: GPTs had no memory across conversations. Every time you started a chat, you had to re-explain context. For simple tasks, this was fine. For anything involving multi-step workflows or institutional knowledge, it was a non-starter.

2. No proactive execution: GPTs only worked when a user typed to them. They couldn’t monitor a Slack channel, run a daily report at 9 AM, or trigger on an incoming support ticket. They were reactive, not agentic.

3. Single-user focus: Even when shared, GPTs were designed for one person using them at a time. There was no concept of team ownership, no audit logs, no approval workflows. They were personal assistants pretending to be team players.

OpenAI heard the feedback. In internal post-mortems, product leads acknowledged that GPTs had been “version 0.5” of something larger. But it took two years – and a complete re-architecting around Codex – to deliver version 1.0.

Now, Workspace Agents are here. And the old GPTs are officially legacy.

“Your existing GPTs will continue to work for now,” the announcement notes, almost as a condolence. “A conversion tool is coming soon.” That is corporate-speak for: the old way is over. Adapt or rebuild.

Part II: Under the Hood – Codex, State, and Agency

The technical leap from GPTs to Workspace Agents is not incremental. It is generational.

The Codex Backbone

Unlike the original GPTs, which ran on the same inference engine as ChatGPT, Workspace Agents are powered by Codex in the cloud. This is a crucial distinction. Codex – OpenAI’s specialized coding and reasoning model – excels at multi-step planning, tool use, and self-correction. It was designed for execution, not conversation.

By switching to Codex, OpenAI is admitting that workflow automation is fundamentally a coding problem, not a chat problem. Agents need to write and run code, query databases, call APIs, and handle conditional logic. A conversational model can fake some of this. Codex was built for it.

Stateful Memory

The most requested feature from GPT users was memory. Workspace Agents deliver it. Agents can now retain information across sessions, learn from corrections, and build a persistent representation of their tasks. If an agent is told “when you generate financial reports, always round to two decimals and exclude intercompany transactions,” it remembers that instruction indefinitely – or until a workspace admin changes it.

Importantly, this memory is workspace-scoped, not user-scoped. An agent serving an accounting team remembers the team’s policies, not any individual’s preferences. This turns the agent from a personal tool into an organizational asset.
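To make the workspace-versus-user distinction concrete, here is a minimal sketch of memory keyed by workspace. It is not OpenAI's implementation: the `WorkspaceMemory` class, its methods, and the `"accounting"` identifier are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class WorkspaceMemory:
    """Hypothetical store: standing instructions keyed by workspace, not by user."""

    notes: dict[str, list[str]] = field(default_factory=dict)

    def remember(self, workspace_id: str, instruction: str) -> None:
        # Every member of the workspace contributes to the same list.
        self.notes.setdefault(workspace_id, []).append(instruction)

    def recall(self, workspace_id: str) -> list[str]:
        # Return a copy so callers cannot mutate the stored state.
        return list(self.notes.get(workspace_id, []))


memory = WorkspaceMemory()
memory.remember("accounting", "Round to two decimals")
memory.remember("accounting", "Exclude intercompany transactions")
```

Because the key is the workspace rather than the person, anyone on the accounting team who invokes the agent would get the same standing instructions back.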

Proactive Execution

GPTs waited. Workspace Agents act. They can be scheduled (every Friday at 9 AM, generate metrics report) or deployed into Slack (when someone types @finance-agent, reconcile the latest expenses), with triggers on external events such as webhooks promised in coming weeks.

This shift from pull to push is subtle but profound. A scheduled agent doesn’t require a human to remember to run it. An agent living in Slack doesn’t require a human to switch contexts. The work comes to the team, not the other way around.
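The two activation styles in the examples above, a weekly schedule and a Slack mention, can be sketched in a few lines. This is an illustration only: `WeeklySchedule` and `mentions_agent` are hypothetical helpers, not part of any OpenAI or Slack API.

```python
import re
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class WeeklySchedule:
    """Hypothetical schedule: fires during one hour of one weekday."""

    weekday: int  # datetime.weekday() convention: Monday is 0, Friday is 4
    hour: int     # 24-hour clock

    def is_due(self, now: datetime) -> bool:
        return now.weekday() == self.weekday and now.hour == self.hour


def mentions_agent(message: str, handle: str) -> bool:
    """True when a chat message tags the agent, e.g. '@finance-agent'."""
    # Reject matches embedded in longer tokens, such as an email address.
    pattern = rf"(?<!\w)@{re.escape(handle)}(?![\w-])"
    return re.search(pattern, message) is not None


friday_report = WeeklySchedule(weekday=4, hour=9)
```

The schedule check needs no human in the loop, while the mention check only fires when a teammate tags the agent, which is the pull-versus-push difference in miniature.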

Approval Workflows

Perhaps the most enterprise-friendly feature is the ability to require human approval before sensitive actions. An agent can draft an email, but not send it until a manager clicks “approve.” It can prepare a journal entry, but not post it until the controller reviews. This bridges the gap between automation and control – a gap that has kept many AI workflows out of regulated industries.

“We’ve seen too many pilots die because the AI did something unexpected and no one could stop it,” said Marcus Tylor, an IT director at a Fortune 500 manufacturing firm who has been testing Workspace Agents in beta. “The approval step sounds like friction, but it’s actually the thing that lets you trust the agent. Without it, you’re just hoping.”
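The approval step is easy to picture as a gate that an action cannot pass until a human flips a flag. The sketch below assumes nothing about how OpenAI implements it; `PendingAction` and its methods are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PendingAction:
    """Hypothetical wrapper: a drafted action that waits for sign-off."""

    description: str
    run: Callable[[], str]
    approved: bool = False

    def approve(self) -> None:
        # In a real system this would be tied to an authenticated approver.
        self.approved = True

    def execute(self) -> str:
        if not self.approved:
            raise PermissionError(f"Awaiting approval: {self.description}")
        return self.run()


draft = PendingAction("Send follow-up email", run=lambda: "email sent")
```

Calling `draft.execute()` before `draft.approve()` raises `PermissionError`; after approval, the same call runs the action. The agent does the drafting either way, and only the final, irreversible step is held back.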

Part III: Inside OpenAI – How the Company Eats Its Own Dog Food

The most compelling evidence for Workspace Agents is not the feature list – it is how OpenAI itself is using them. The company’s internal deployment has become a testing ground for the product’s real-world value, and the early use cases are striking.

Sales: Account Research & Follow-Up Drafts

OpenAI’s sales team has built an agent that aggregates call transcripts (from Gong), customer research notes, and product usage data. When a sales representative finishes a call, the agent automatically produces a summary, identifies next steps, and drafts a personalized follow-up email. The rep reviews, edits, and sends.

The impact, according to internal metrics shared with this publication, is a 40% reduction in time spent on post-call admin work. More importantly, the agent catches details humans miss – a mention of a competitor, a budget signal, a timeline commitment.

“Before, our reps would finish a call, take five minutes of notes, and then forget half of what was said by the time they got to the email,” one sales operations lead told me. “Now the email is waiting for them before they’ve even hung up. They just need to check it for tone and hit send.”

Accounting: Journal Entries & Reconciliations

The accounting team has built an agent for month-end close. It pulls transaction data from the ERP, identifies uncategorized entries, proposes journal entries based on historical patterns, and runs preliminary reconciliations. For complex items, it flags the entry for human review. For routine ones, it posts automatically after a manager’s approval.

The result: a close process that used to take eight days now takes four. The agent doesn’t replace accountants – it handles the 80% of work that is repetitive, leaving humans to focus on the 20% that requires judgment.

Product Feedback: Routing & Summarization

The product team’s agent monitors Slack, support channels, and public forums for user feedback. It categorizes incoming messages, prioritizes them based on sentiment and volume, creates tickets in the internal tracking system, and generates a weekly product summary that the team reviews every Monday.

This is the kind of workflow that no human wants to do – reading hundreds of messages to find the five that matter – and that no previous AI could do reliably. The agent has been running for three months and has a reported 94% accuracy in routing feedback to the right product manager.

Third-Party Risk: Automated Vetting

The finance team’s agent handles vendor due diligence. When a new vendor is proposed, the agent researches the company’s financial health, sanction status, reputational signals, and cybersecurity posture. It produces a structured report with a risk score and recommended next steps. A human analyst then reviews the report and makes the final decision.

This workflow used to take a junior analyst two days. The agent does it in 15 minutes. The analyst now reviews five times as many vendors, catching risks that would have been missed.

These examples are not theoretical. They are running in production at OpenAI, with real money and real decisions on the line. That is the most powerful validation Workspace Agents could have.

Part IV: The Enterprise Shift – Why Now?

OpenAI’s enterprise push is no secret. The company launched ChatGPT Enterprise in August 2023, added Team in early 2024, and has been steadily building out admin controls, compliance features, and API integrations. But until now, the value proposition has been largely about individual productivity – faster writing, better summaries, quicker code.

Workspace Agents change the equation. They are a team productivity product. And they solve a problem that has quietly cost enterprises millions over the last two years: the fragmentation of AI workflows.

“Every team has accumulated scattered prompts and half-built workflows,” said Priya Krishnamurthy, an AI transformation consultant who has advised a dozen Fortune 500 companies. “One person has a great prompt for summarizing customer tickets. Another has a Zapier automation for Slack alerts. A third has a custom GPT that no one else knows about. But no one has unified them. Workflows are tribal knowledge, not institutional assets.”

Workspace Agents provide a container for codifying that tribal knowledge. A sales team can build one agent that does what three different people were doing with three different tools. That agent can then be shared, audited, and improved over time. When a salesperson leaves, the agent stays. The knowledge doesn’t walk out the door.

This is why the timing matters. Enterprises have spent two years experimenting with AI. They have seen the potential. But they have also seen the chaos – the sprawl of unmanaged prompts, the duplication of effort, the security risks of unsanctioned tools. Workspace Agents arrive as a governance layer on top of that chaos.

“OpenAI is finally treating enterprises like enterprises,” Krishnamurthy added. “Not as collections of individuals, but as systems with processes, approvals, and compliance requirements. That’s a very different product. And it’s the one enterprises have been asking for.”

Part V: The Controlled Handshake – Permissions, Audits, and Safety

For all the talk of agency and automation, the feature that will determine Workspace Agents’ success in the enterprise is not what they can do – it is what they cannot do without permission.

OpenAI has built a multi-layered control system:

Data Usage Restrictions: Workspace admins can define which data sources an agent can access. An agent in the legal department cannot accidentally query sales data. An agent in HR cannot see engineering tickets unless explicitly granted.

Approval Gateways: For sensitive actions – sending emails, editing database entries, creating calendar invites – admins can require real-time human approval. The agent stops, notifies the designated approver, and waits. This is not a nice-to-have. It is a requirement for any regulated industry.

Role-Based Permissions: Enterprise and Edu admins can control which user groups can build agents, which can use existing agents, and which can share agents across teams. A junior employee can be given “use only” permissions. A manager can be given “build and share.” An admin can see everything.

Compliance API: For organizations with stringent logging requirements, OpenAI is providing a Compliance API that exports every agent configuration, update, and run. This allows security teams to monitor for unauthorized changes or unusual activity patterns.

Prompt Injection Protections: The announcement notes that agents have built-in safeguards against “misleading external content, including prompt injection attacks.” This is critical for agents deployed in Slack or other public channels, where a malicious user could theoretically attempt to hijack the agent’s instructions. OpenAI claims the agent will “follow your instructions strictly, without deviating from the intended logic.”
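The role-based tier described above (“use only,” “build and share,” and an admin who sees everything) maps naturally onto permission flags. The following is a sketch under stated assumptions: the role names, the `Permission` flags, and the choice to let managers and admins also use agents are all hypothetical, not OpenAI's actual schema.

```python
from enum import Flag, auto


class Permission(Flag):
    USE = auto()
    BUILD = auto()
    SHARE = auto()
    VIEW_ALL = auto()


# Assumed mapping for illustration; real role definitions may differ.
ROLE_PERMISSIONS = {
    "junior": Permission.USE,
    "manager": Permission.USE | Permission.BUILD | Permission.SHARE,
    "admin": Permission.USE | Permission.BUILD | Permission.SHARE | Permission.VIEW_ALL,
}


def can(role: str, needed: Permission) -> bool:
    """True only if the role holds every permission in `needed`."""
    # Unknown roles get no permissions at all (deny by default).
    granted = ROLE_PERMISSIONS.get(role, Permission(0))
    return (granted & needed) == needed
```

Under this mapping, `can("junior", Permission.BUILD)` is false and `can("manager", Permission.BUILD | Permission.SHARE)` is true, while a role that is not listed is denied everything.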

These controls suggest that OpenAI has learned from the shadow AI crisis of 2024-2025, when employees adopted unsanctioned tools faster than IT could respond. Workspace Agents are designed to be the sanctioned path – so compelling and well-governed that teams choose them over risky alternatives.

Part VI: The Pricing Question – Free Trial, Then Consumption

Workspace Agents are launching with a generous – and strategic – pricing model. From today until May 6, 2026, they are free for all eligible ChatGPT Business, Enterprise, Edu, and teacher plans. After that, they will convert to a “quota-based” consumption model.

OpenAI has not yet announced specific pricing, but internal discussions suggest a model similar to API tokens: enterprises purchase blocks of agent “runtime” or “actions,” with costs varying by the complexity of the tasks. Simple agents that run on a schedule (e.g., weekly reports) will be inexpensive. Complex agents that perform real-time code execution, database queries, and multi-step approvals will cost more.

The free trial period is clearly designed to drive adoption. OpenAI wants teams to build agents, fall in love with them, and then be unwilling to turn them off when the trial ends. It is a classic land-and-expand strategy – give away the razor, sell the blades.

For large enterprises, the cost will likely be marginal compared to the productivity gains. An agent that saves a sales team 50 hours a week is easily worth tens of thousands of dollars per year. But for smaller teams or educational institutions, pricing could be a barrier.
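The arithmetic behind that estimate is simple enough to check. In the sketch below, the 50 hours per week comes from the example above, but the $30 hourly cost is purely an illustrative assumption, not a figure from OpenAI or from anyone interviewed for this article.

```python
def annual_value(hours_saved_per_week: float, hourly_cost: float,
                 weeks_per_year: int = 52) -> float:
    """Rough yearly value of time saved: hours x weeks x cost per hour."""
    return hours_saved_per_week * weeks_per_year * hourly_cost


# 50 hours/week is from the text; $30/hour is an assumed loaded labor cost.
estimate = annual_value(50, 30.0)
```

At that assumed rate the result is $78,000 a year, and the figure scales linearly with whatever hourly cost a given team actually carries.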

“The quota model makes sense – usage isn’t uniform – but OpenAI needs to be careful not to price out the mid-market,” said Vikram Sethi, an analyst at a boutique AI research firm. “If agents become too expensive, teams will go back to cobbling together Zapier, Make, and custom scripts. That’s worse for everyone.”

Part VII: The Road Ahead – Triggers, Dashboards, and Codex Apps

The launch of Workspace Agents is not a finish line. It is a foundation. OpenAI has already outlined several features due in the “coming weeks”:

New triggers: Beyond scheduled and Slack-based activation, agents will soon be able to trigger on file uploads, database changes, or external webhooks. This will enable much richer automation – e.g., “when a new ticket arrives in Jira, run this agent.”

Enhanced dashboards: Admins will get visibility into which agents are being used, how often, and by whom. This will help teams identify which workflows are worth maintaining and which are abandoned.

More cross-tool actions: Currently, agents can call connected apps via actions. The roadmap includes deeper integrations with common enterprise tools – Salesforce, Workday, Confluence, and others.

Codex apps: Perhaps most intriguingly, OpenAI teases “Codex app support for Workspace Agents.” This suggests that third-party developers may eventually be able to build plugins or extensions specifically for agents, creating an ecosystem around workflow automation – something the GPT Store never achieved.

If OpenAI executes on this roadmap, Workspace Agents could evolve from a useful feature into a platform. That is the real prize: not just selling agent runtime, but becoming the operating system for enterprise AI workflows.

Conclusion: A Second Act with Better Odds

The GPT Store failed. That is not a secret. But failure in AI is rarely terminal – it is usually educational. OpenAI learned that solo, stateless, reactive custom models were a niche product, not a mass-market one. They learned that enterprises need governance, approvals, and persistence. And they learned that the real value of AI is not in answering questions, but in doing work – continuously, autonomously, and reliably.

Workspace Agents are the product of those lessons. They are not a rebrand of GPTs. They are a re-architecture. Codex, not ChatGPT. Stateful, not stateless. Proactive, not reactive. Shared, not solo. Governed, not wild.

The initial GPT Store didn’t stick. But agentic upgrades and an enterprise shift give Workspace Agents a genuine chance to find a better fit. The early internal usage at OpenAI is promising. The feature set addresses real pain points. And the pricing model, at least during the free trial, lowers the barrier to entry.

Of course, challenges remain. The “conversion tool” for old GPTs could be messy. Quota-based pricing could alienate smaller teams. And agents, like any automation, will sometimes fail in ways that frustrate users more than manual work ever did.

But for the first time since the GPT Store’s quiet fade, OpenAI has a credible answer to the question: “What comes after chat?” The answer is not a better chatbot. It is a coworker that never sleeps, never forgets, and always follows the rules – but asks for permission when it should.

That is not just an upgrade. That is an evolution. And for the millions of knowledge workers drowning in scattered prompts and half-built workflows, it may be exactly what they have been waiting for.

Reporting for this article was based on OpenAI’s official announcement, internal usage metrics shared with this publication, interviews with three enterprise beta testers, and analysis from AI workflow consultants. OpenAI declined to provide specific pricing details for the post-trial period but confirmed that a consumption-based model is planned.

J. J. Weaver is a technology and enterprise journalist. Their work has appeared in The Information, TechCrunch, and MIT Technology Review.

Your one-stop shop for automation insights and news on artificial intelligence is EngineAi.
