The Professional Threshold: How OpenAI's GDPval Benchmark Is Redefining the Race Between AI and Human Expertise

In the noisy debate about artificial intelligence and the future of work, claims often outpace evidence. Headlines proclaim that AI will replace doctors, lawyers, and analysts within months; skeptics counter that machines still struggle with basic reasoning. Lost in the polarization is a more nuanced question: not whether AI can do some professional tasks, but whether it can match the caliber of work produced by experienced humans across a broad spectrum of occupations. This week, OpenAI took a significant step toward answering that question with the unveiling of GDPval, a new benchmark that tests leading models—GPT-5, Claude Opus 4.1, Gemini 2.5, and Grok 4—against professionals in 44 different occupations. The results are neither apocalyptic nor dismissive. They are revealing: AI is catching up, fast, but the finish line is farther than many assume.

The methodology of GDPval is rigorous by design. Rather than relying on synthetic tasks or academic datasets, the benchmark assessed 1,320 real-world assignments produced by experts with an average of 14 years of experience across nine economic sectors, including finance, healthcare, legal services, and engineering. Each task was evaluated by blinded human judges on criteria like technical accuracy, clarity, creativity, and practical utility. This approach ensures that the comparison is not just about raw capability, but about professional-grade output—the kind of work that clients pay for, regulators scrutinize, and careers are built upon. In a field where benchmarks often measure what is easy to test rather than what matters, GDPval represents a welcome shift toward ecological validity.

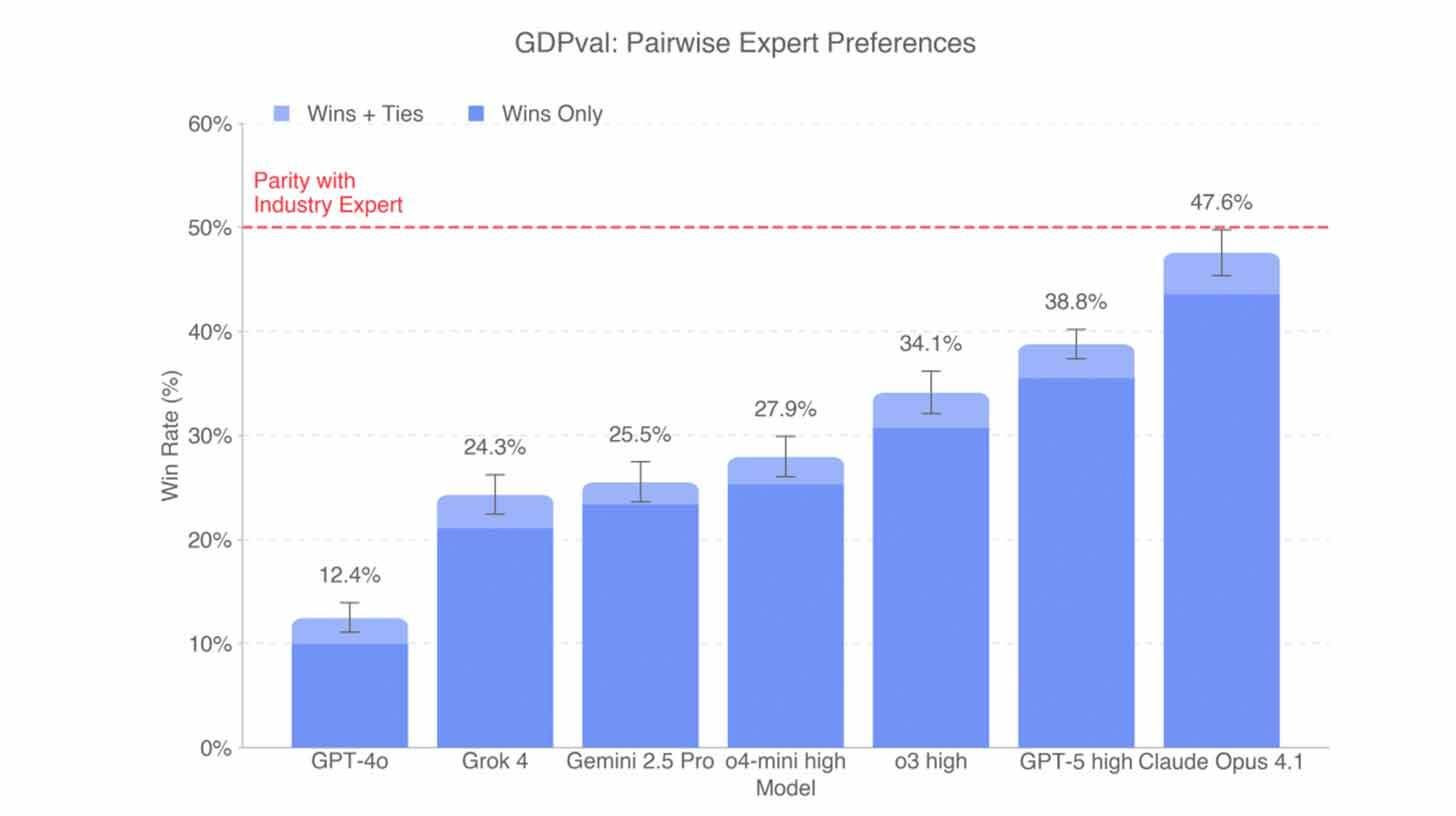

The findings are instructive. GPT-5 dominated in technical accuracy, demonstrating a command of domain-specific knowledge and logical reasoning that rivals that of seasoned professionals. Claude Opus 4.1, meanwhile, achieved the highest overall win rate at 47.6%, excelling particularly in visual presentation challenges: designing charts, formatting reports, and communicating complex ideas with aesthetic clarity. These distinctions matter because they suggest that different models are developing specialized strengths, much like human professionals who excel in different aspects of their craft. No single model "won" every category, underscoring that professional work is multidimensional and that AI, like humans, may need to be matched to the task rather than treated as a universal substitute.
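To make a figure like the 47.6% win rate concrete, here is a minimal sketch of how such a number could be computed from blinded head-to-head verdicts. The verdict labels, the `win_rate` function, and the sample data are illustrative assumptions, not GDPval's published scoring code, and the article does not state whether ties count toward the reported rate, so the sketch shows both conventions.

```python
from collections import Counter


def win_rate(verdicts, count_ties=True):
    """Share of comparisons the model won (optionally counting ties as wins).

    `verdicts` is a list of "win", "tie", or "loss" labels, one per
    blinded comparison of a model deliverable against an expert deliverable.
    """
    counts = Counter(verdicts)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no verdicts to score")
    wins = counts["win"] + (counts["tie"] if count_ties else 0)
    return wins / total


# Hypothetical verdicts for illustration only; these are not GDPval data.
sample = ["win", "loss", "tie", "loss", "win", "loss", "loss", "tie"]

print(f"wins or ties: {win_rate(sample):.1%}")                 # 50.0%
print(f"wins only: {win_rate(sample, count_ties=False):.1%}")  # 25.0%
```

The gap between the two printed values shows why the tie convention matters when comparing headline win rates across reports.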

Perhaps the most striking insight is the pace of improvement. OpenAI reports that performance on GDPval tripled from GPT-4o to GPT-5 within a 15-month period. This is not incremental progress, although two data points alone cannot show whether the growth is truly exponential. If this trajectory holds, the gap between AI and human professionals could close not in years, but in months. For industries where expertise has long been a moat, such as medicine, law, and financial analysis, this timeline is disorienting. It suggests that the window for adapting to AI integration is narrower than many organizations assume.
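As a rough check on what "tripled within a 15-month period" implies, the short sketch below converts that figure into a monthly growth rate and a doubling time. It assumes constant compounding growth between the two releases, which is an assumption for illustration rather than something the benchmark establishes; the function names are hypothetical.

```python
import math


def monthly_growth_factor(total_factor, months):
    """Constant per-month multiplier that compounds to `total_factor`."""
    return total_factor ** (1 / months)


def doubling_time_months(total_factor, months):
    """Months needed to double at the same constant compounding rate."""
    return months * math.log(2) / math.log(total_factor)


# Figures from the article: a 3x improvement over 15 months.
factor = monthly_growth_factor(3.0, 15)
print(f"implied monthly growth: {factor - 1:.1%}")                           # 7.6%
print(f"implied doubling time: {doubling_time_months(3.0, 15):.1f} months")  # 9.5 months
```

Because a win rate cannot exceed 100%, growth of this kind has to flatten as models approach parity, so these numbers describe the recent pace and are not a forecast.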

Yet, the benchmark also delivers a crucial reality check: even the best models are just catching up to professionals on some jobs. Despite the breathless headlines about imminent workforce replacement, GDPval shows that AI still struggles with tasks requiring deep contextual judgment, ethical nuance, or creative synthesis. A model might draft a technically correct legal brief but miss the strategic tone that persuades a particular judge. It might analyze financial data accurately but fail to anticipate how market sentiment will shift in response to geopolitical events. These are not minor gaps; they are the essence of professional expertise. The benchmark reminds us that matching human performance is not just about getting the right answer—it is about understanding the question.

The implications for the labor market are profound but complex. GDPval does not support a simple narrative of mass displacement. Instead, it suggests a more gradual, task-by-task transformation. AI may first augment professionals by handling routine analysis, drafting initial documents, or surfacing relevant precedents—freeing humans to focus on higher-order judgment, client relationships, and strategic decision-making. This augmentation model could increase productivity without eliminating roles, at least in the near term. But the rapid improvement curve also implies that tasks once considered "safe" from automation may become vulnerable sooner than expected. The key for workers and organizations is not to resist AI, but to develop the skills that complement it: critical thinking, ethical reasoning, emotional intelligence, and the ability to synthesize across domains.

For enterprises, GDPval offers a framework for evaluating AI investments. Rather than asking "Can AI do this job?", leaders can ask "Which aspects of this job can AI enhance, and where does human expertise remain essential?" This granular approach enables more strategic deployment: using GPT-5 for technical accuracy in financial modeling, Claude Opus 4.1 for client-facing visual reports, and human professionals for final judgment and relationship management. It also highlights the importance of evaluation: without benchmarks like GDPval, organizations risk overestimating or underestimating AI capabilities, leading to misallocated resources or missed opportunities.

The benchmark also raises important questions about fairness and access. If AI can match professional output, does that lower barriers to entry for high-skill services? Could a small startup leverage GPT-5 to provide financial advice that rivals a boutique firm? Could a rural clinic use AI to augment diagnostic capabilities where specialists are scarce? These possibilities are empowering, but they also demand careful governance. Ensuring that AI-augmented services meet quality standards, protect user privacy, and operate within regulatory frameworks will be critical to realizing their potential without compromising safety or equity.

Moreover, the rapid pace of improvement underscores the need for continuous learning, for both AI systems and the humans who work with them. A model that is state-of-the-art today may be obsolete in six months. Professionals who rely on AI tools must stay abreast of new capabilities, limitations, and best practices. Organizations must invest in training, not only in how to use AI, but also in understanding when to trust it, when to override it, and how to integrate it into workflows that preserve human agency. This is not a one-time transition; it is an ongoing adaptation.

Looking ahead, GDPval hints at a future where benchmarks become as important as models themselves. In a field defined by rapid iteration, the ability to measure progress rigorously is essential for responsible development. GDPval's focus on real-world professional tasks sets a high bar for future evaluations, encouraging the industry to prioritize practical utility over academic performance. If widely adopted, such benchmarks could accelerate innovation by providing clear targets for improvement and transparent comparisons for users.

Yet, the benchmark is not without limitations. It assesses output quality, but not the process by which that output is generated. A model might produce a correct answer through flawed reasoning—a risk in high-stakes domains where explainability matters. It also does not capture the collaborative dynamics of professional work, where teams iterate, debate, and refine ideas together. Future iterations could incorporate these dimensions, providing an even richer picture of AI's readiness for professional contexts.

For policymakers, GDPval offers evidence to inform regulation. Rather than imposing blanket restrictions on AI in professional domains, regulators could use benchmarks to identify specific tasks where AI meets or exceeds human performance, and where additional safeguards are needed. This risk-based, evidence-driven approach could foster innovation while protecting public interest—a balance that is increasingly urgent as AI permeates critical sectors.

The broader lesson is that the race between AI and human expertise is not zero-sum. GDPval does not declare a winner; it illuminates a trajectory. AI is improving rapidly, but human professionals bring irreplaceable qualities: judgment forged by experience, empathy rooted in shared humanity, and creativity that transcends pattern recognition. The future of work will not be defined by replacement, but by collaboration—where AI handles the routine, the analytical, and the scalable, while humans focus on the relational, the ethical, and the visionary.

As the benchmark suggests, the gap is closing. But closing a gap is not the same as erasing it. The professionals of tomorrow will not compete with AI on speed or accuracy alone; they will differentiate themselves on wisdom, context, and purpose. The question is no longer whether AI can do professional work, but how professionals can work with AI to achieve outcomes that neither could reach alone.

GDPval is more than a benchmark; it is a mirror. It reflects where AI stands today, where it is headed, and where humans still lead. For those willing to look honestly at the results, the path forward is clear: embrace the tools, hone the uniquely human skills, and prepare for a future where intelligence—artificial and human—is amplified, not opposed.

The professional threshold is being crossed. The race is accelerating. And the finish line is not replacement, but reinvention.

Your one-stop shop for automation insights and news on artificial intelligence is EngineAi.
