The Swarm Awakens: How Claude Opus 4.6 Is Redefining AI Collaboration Through Multi-Agent Intelligence

Anthropic's latest flagship doesn't just process information—it orchestrates teams of AI agents, integrates seamlessly into enterprise workflows, and signals a shift from solitary chatbots to collaborative artificial workforces.

February 5, 2026, will be remembered as the day the paradigm shifted. While OpenAI's GPT-5.3-Codex grabbed headlines with its recursive self-improvement capabilities, Anthropic delivered an equally profound evolution: Claude Opus 4.6—a model that transforms AI from a solitary assistant into a coordinated team of specialized agents working in parallel. This isn't merely an upgrade; it's a fundamental reimagining of how artificial intelligence can tackle complex, multi-faceted problems.

The technical specifications immediately established Opus 4.6 as a powerhouse. For the first time, Anthropic's premium Opus tier features a 1 million token context window—matching what the Sonnet series previously offered but combining it with Opus's superior reasoning capabilities. This expansion enables processing approximately 750,000 words in a single pass, equivalent to analyzing entire code repositories, comprehensive technical documentation libraries, or hundreds of pages of research simultaneously.

But the context window expansion, impressive as it is, serves merely as the foundation for something more revolutionary: the introduction of "agent teams" in Claude Code. This feature allows multiple AI agents to collaborate simultaneously on a single project, dividing labor and coordinating efforts rather than handling tasks sequentially. One agent might architect the backend API while another constructs frontend components, a third writes comprehensive test suites, and a fourth reviews the collective output for security vulnerabilities—all operating in parallel within a unified session.

From Solo Performance to Symphony

The multi-agent architecture represents a decisive break from the traditional AI assistant model. For years, users interacted with chatbots as they would with a single intern: capable, responsive, but fundamentally limited by sequential processing. Agent Teams shatter this constraint through a lead agent and subagent model with centralized orchestration.

When initiating an Agent Teams session, a primary orchestrator assumes command. This lead agent decomposes complex tasks into parallelizable subtasks, delegates responsibilities to specialized subagents, monitors progress, handles inter-agent coordination, and synthesizes results. Each subagent operates as a semi-independent process with its own context window, focused exclusively on its assigned domain while remaining aware of teammates' activities.

This architecture elegantly solves the context window limitations that have long plagued AI coding tools. Rather than forcing a single agent to maintain awareness of an entire large codebase—inevitably leading to detail loss as conversations progress—each subagent maintains context only for its specific responsibility area. The backend agent knows the database schema and API contracts; the frontend agent understands component libraries and styling conventions; the testing agent focuses on coverage and edge cases.

The coordination layer distinguishes this from simply running multiple Claude Code instances. These agents communicate, flag dependencies, and avoid conflicts through a shared messaging infrastructure. If the frontend requires API types that the backend agent hasn't finalized, the orchestrator can sequence work appropriately or establish preliminary interfaces that evolve as dependencies resolve.

The Enterprise Infiltration

Anthropic's strategic vision extends beyond technical capabilities to deep ecosystem integration. Opus 4.6 launched alongside Claude's expansion into Microsoft Office environments—specifically PowerPoint and Excel—positioning the AI not as an external tool but as an embedded component of existing professional workflows.

In PowerPoint, Claude now operates within existing templates, reading slide masters, layouts, fonts, and color schemes to generate or revise content while maintaining brand consistency. Users can describe requirements—such as market sizing sections or competitive analysis—and Claude produces slides using correct layouts and formatting. The system creates native, editable charts and diagrams rather than inserting static images, supporting iterative refinement within live presentations.

The Excel integration offers equally sophisticated capabilities: pivot table editing, chart modifications, conditional formatting, and comprehensive spreadsheet manipulation. This targets the structured data workflows central to finance, operations, and analytics professionals who previously faced friction copying data between AI chat interfaces and spreadsheet applications.

These integrations reflect a mature understanding of enterprise requirements. By embedding directly within applications where work already occurs, Anthropic eliminates the context-switching penalty that has limited AI adoption in high-stakes professional environments. Compliance frameworks remain intact; brand controls stay enforced; governance structures require no modification. The AI becomes invisible infrastructure rather than visible interruption.

Benchmarking the Breakthrough

The quantitative performance validates Anthropic's architectural bets. On ARC-AGI-2—a benchmark testing novel reasoning and abstraction capabilities—Opus 4.6 achieved approximately 75.2%, representing a dramatic leap from previous generations and approaching the threshold of human-level performance on complex reasoning tasks. This matters because ARC-AGI-2 specifically tests capabilities not found in training data, measuring genuine generalization rather than pattern matching.

On SWE-bench Verified, which evaluates real-world software engineering tasks across multiple programming languages, Opus 4.6 reached 80.8%—establishing state-of-the-art performance on practical coding challenges. The Terminal-Bench 2.0 score of 62.7% demonstrated sophisticated command-line proficiency essential for autonomous development workflows.

The OSWorld-Verified benchmark, testing AI control of desktop computing environments, yielded 72.7%—indicating near-human capability in navigating graphical interfaces, manipulating files, and operating standard software applications. This desktop automation competence, combined with the Office integrations, creates a continuum of capability from deep code analysis to everyday productivity tasks.

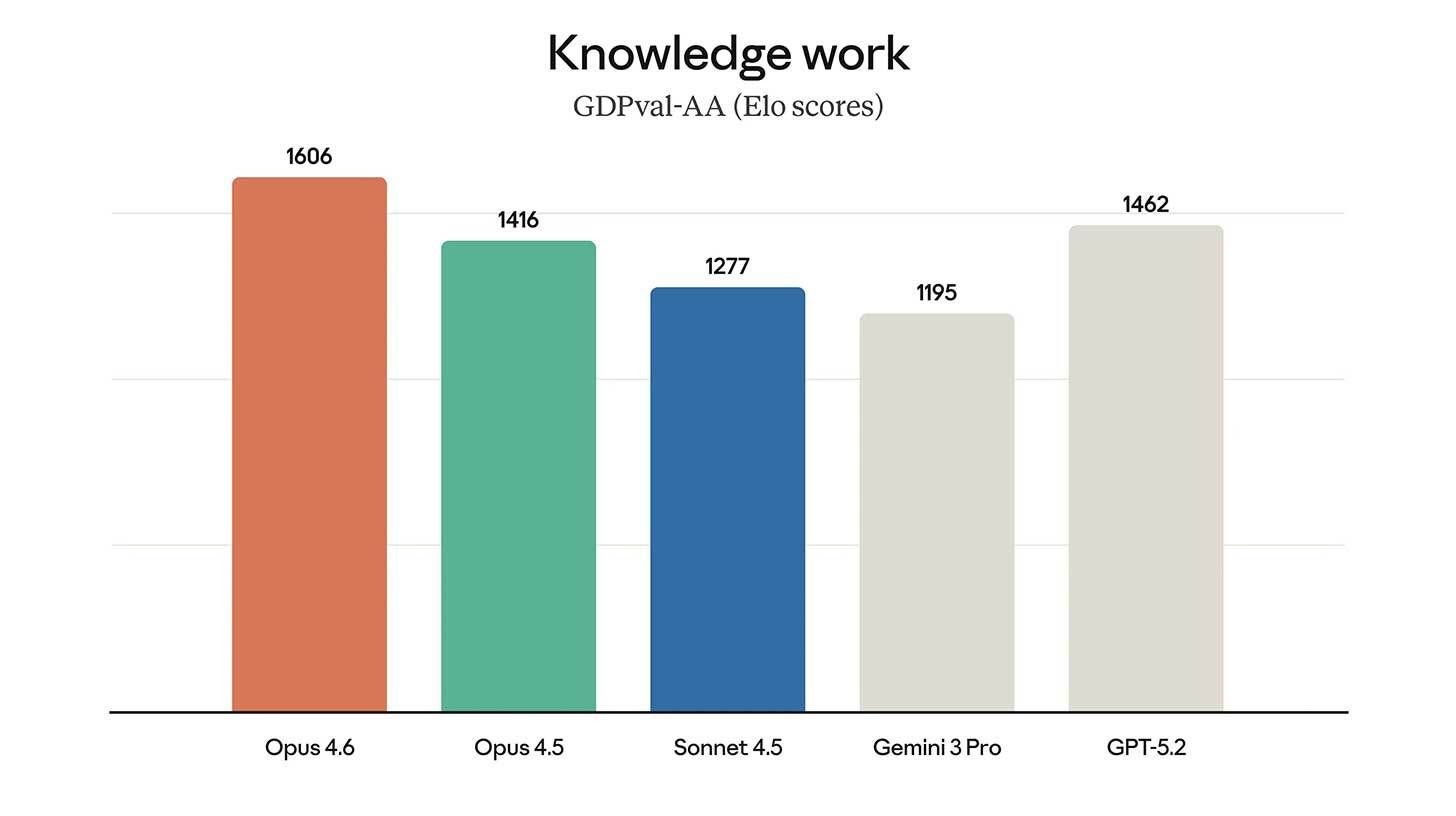

Particularly notable is the model's performance on GDPval-AA, which evaluates economically valuable professional work including financial analysis, document generation, and presentation creation. Opus 4.6 achieved 1559 Elo on this metric, validating Anthropic's positioning of the model as a general work agent rather than merely a coding specialist.

The Self-Referential Horizon

The timing of Opus 4.6's release—coinciding precisely with OpenAI's GPT-5.3-Codex announcement—created a revealing juxtaposition. Both companies unveiled models capable of contributing to their own development. While OpenAI emphasized Codex's role in debugging training pipelines, Anthropic highlighted Opus 4.6's capacity for alignment research and capability improvements through careful reasoning about AI systems.

Dario Amodei, Anthropic's CEO, has been notably explicit about this trajectory, confirming that Claude is actively involved in designing its successor. This transparency about recursive self-improvement—once confined to theoretical discussions—now characterizes mainstream AI development strategies. The competitive dynamic has shifted from who can train the largest model to who can most effectively harness AI systems to accelerate their own evolution.

The "agent teams" capability takes on additional significance in this context. If a single AI agent can contribute to research and development, a coordinated team of specialized agents potentially multiplies this contribution. One agent might analyze safety considerations while another optimizes training efficiency, a third examines evaluation methodologies, and a fourth synthesizes findings into actionable improvements—all operating simultaneously with awareness of each other's insights.

Architectural Innovations Under the Hood

Several technical enhancements enable Opus 4.6's expanded capabilities. The adaptive thinking feature allows the model to dynamically adjust its reasoning depth based on task requirements—applying extended analysis for complex problems while maintaining responsiveness for routine queries. This optimization prevents the uniform computational expense that has made previous frontier models economically challenging at scale.

Context compaction, introduced in beta, enables theoretically infinite conversations through automatic server-side summarization. When exchanges approach context limits, the system generates compressed summaries of earlier dialogue, preserving essential information while freeing token capacity for continued interaction. For agent workflows involving extensive tool use and lengthy reasoning chains, this mechanism maintains coherence without manual context management.

The API now supports outputs up to 128,000 tokens—double the previous maximum—enabling comprehensive document generation, detailed code implementations, and extensive analysis reports without the fragmentation that previously complicated long-form outputs. Combined with the 1 million token input window, this creates an asymmetric context model suited for absorbing massive information volumes while producing substantial, coherent outputs.

Pricing reflects these capability tiers: standard rates of $5 per million input tokens and $25 per million output tokens apply for contexts under 200,000 tokens, while exceeding this threshold triggers long-context pricing at $10 and $37.50 respectively. This structure incentivizes efficient use while ensuring access to expanded capabilities when genuinely required.

The Convergence of Competition

The synchronized releases from Anthropic and OpenAI on February 5th revealed the true competitive frontier. Public attention had recently fixated on peripheral controversies—Sam Altman's Super Bowl advertisement decisions, social media disputes between tech luminaries, marketing positioning—but these distractions dissolved against the magnitude of simultaneous technical breakthroughs.

Both companies now explicitly acknowledge that their models contribute to developing successor systems. Both emphasize agentic capabilities extending beyond chat interfaces to active computer control and multi-step workflow automation. Both recognize that the next generation of AI will be shaped significantly by the current generation's ability to reason about AI development itself.

This convergence suggests the industry has entered a new phase where linear capability projections become inadequate. When AI systems become primary contributors to their own evolution—whether through debugging training infrastructure like Codex or conducting alignment research like Claude—improvement curves may accelerate beyond historical patterns. The "AI is hitting a wall" narrative, prevalent in certain skeptical quarters, faces mounting empirical contradiction.

Implications for the Workforce

The multi-agent collaboration model carries profound implications for knowledge work organization. Traditional AI assistants augmented individual productivity; Agent Teams potentially augment team productivity. A developer might orchestrate multiple Claude agents as they would coordinate human colleagues—assigning specific responsibilities, reviewing integrated outputs, and maintaining architectural oversight while delegating implementation details.

This shifts the human role from execution to orchestration. Rather than writing code line-by-line, the developer defines objectives, constraints, and quality standards, then guides agent teams toward acceptable solutions. The skill set evolves from syntax mastery toward architectural thinking, requirement specification, and quality judgment.

For enterprises, the Office integrations signal that this transformation extends beyond technical roles. Marketing teams might deploy agent teams to generate campaign materials across formats—presentations, spreadsheets, documentation—simultaneously while maintaining brand consistency. Financial analysts could coordinate agents for data gathering, model building, visualization, and report generation. The pattern of parallel specialization applies across domains.

Navigating the Risks

Anthropic's safety approach with Opus 4.6 emphasizes expanded testing and defensive applications. The company reports maintaining safety profiles comparable to or better than other frontier models, with low rates of misaligned behavior in internal evaluations. Testing expansion includes new probes for harmful cyber-related responses and evaluations tied to user well-being.

Notably, Anthropic emphasizes cyberdefensive use cases—identifying and patching vulnerabilities in open-source software—positioning the model's capabilities toward protective rather than destructive applications. This framing acknowledges the dual-use nature of advanced AI coding abilities while attempting to establish norms favoring defensive security research.

The Trusted Access for Cyber program, similar to OpenAI's approach with Codex, gates advanced capabilities behind verification requirements, ensuring sophisticated tools reach only approved researchers with legitimate defensive purposes. These layered safeguards reflect growing recognition that capability advancement must parallel safety infrastructure development.

The Path Forward

Claude Opus 4.6 represents more than incremental improvement; it embodies a architectural philosophy treating AI as collaborative infrastructure rather than conversational interface. The multi-agent paradigm, deep enterprise integration, and recursive development potential collectively suggest a trajectory toward AI systems that function as genuine team members—specialized, coordinated, and capable of sustained autonomous operation.

The model's performance across benchmarks—from ARC-AGI-2's reasoning tests to OSWorld's desktop automation to GDPval's professional task evaluation—demonstrates breadth matching its architectural ambition. This isn't a narrow coding tool or a chatbot with delusions of grandeur; it's a general-purpose reasoning system adapted for practical deployment in complex organizational environments.

As the competitive dynamic between Anthropic and OpenAI intensifies around recursive self-improvement and agentic capabilities, the beneficiaries extend beyond the companies themselves to the broader ecosystem of developers, enterprises, and eventually consumers who will inherit the capabilities these systems pioneer. The "big day of dueling releases" marked not just product announcements, but the emergence of AI systems sophisticated enough to participate in their own advancement—a threshold crossing that redefines what's possible and what's coming next.

Claude Opus 4.6 doesn't just answer questions or write code. It coordinates intelligence, integrates into workflows, and contributes to the research that will produce its successors. In doing so, it offers a glimpse of a future where artificial intelligence isn't merely a tool we use, but a collaborator we work alongside—and one that increasingly helps design the future it will inhabit.

Your one-stop shop for automation insights and news on artificial intelligence is EngineAi.

Did you like this article? Check out more of our knowledgeable resources:

Watch this space for weekly updates on digital transformation, process automation, and machine learning. Let us assist you in bringing the future into your company right now