The Trust Paradox: Why Developers Are Embracing AI While Keeping One Hand on the Wheel

In the rapidly evolving landscape of software development, a fascinating contradiction has emerged. According to Google Cloud's latest annual DORA research on the "State of AI-assisted Software Development," 90% of developers now use AI tools—a near-universal adoption rate that signals a fundamental shift in how code is written. Yet, despite this widespread reliance, confidence in AI outputs remains unexpectedly low. Thirty percent of developers report being "a little" or "not at all" confident in the code their AI assistants generate. This is not a story of blind faith in automation; it is a story of pragmatic adoption, where developers are harnessing AI's productivity gains while maintaining human judgment as the ultimate arbiter of quality. In an industry often polarized between AI evangelism and skepticism, this nuanced reality offers a more mature, more sustainable path forward.

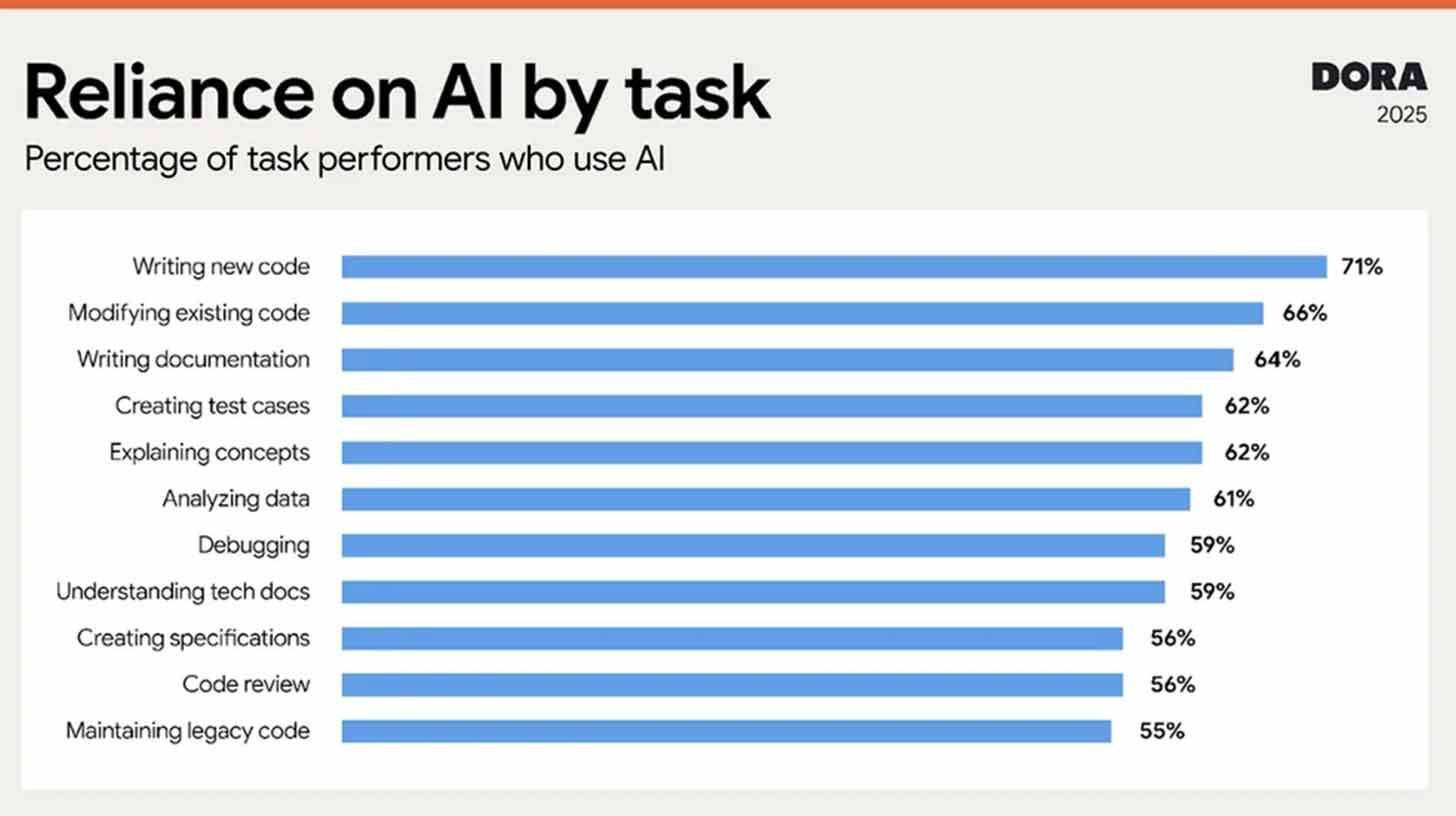

The data reveals a workforce in transition. Developers now spend almost two hours a day interacting with AI helpers—tools that suggest code, debug errors, explain complex functions, and generate boilerplate. This is not casual experimentation; it is deep integration into daily workflows. Yet the same developers who rely on these tools express significant reservations about their outputs. This apparent contradiction dissolves when viewed through the lens of professional craftsmanship. For developers, code is not just functional; it is a form of expression, a testament to skill, and a liability if flawed. Trusting an AI to write production-ready code without scrutiny feels like outsourcing judgment itself. The result is a hybrid workflow: AI accelerates the mechanical aspects of development—syntax, patterns, repetitive tasks—while humans retain oversight for architecture, logic, and edge cases. This is not skepticism as resistance; it is skepticism as rigor.

The reported productivity benefits, however, are substantial. Despite their reservations, 59% of developers report improvements in code quality when using AI assistants, and 80% cite increased efficiency. These figures are self-reported, but they suggest that AI's value lies not in replacing human expertise, but in amplifying it. By handling the tedious, the predictable, and the time-consuming, AI frees developers to focus on higher-order problems: system design, user experience, and strategic innovation. A developer might use AI to generate a standard API endpoint in seconds, then spend that saved time refining error handling or optimizing database queries. The net effect is not just faster coding; it is better coding, enabled by a partnership where each party plays to its strengths.

Google's response to this evolving dynamic is the DORA AI Capabilities Model, a framework outlining seven best practices for organizations seeking to leverage AI's benefits responsibly. While the specifics of the model are detailed in Google's full report, its core philosophy is clear: successful AI adoption requires more than just deploying tools. It demands intentional processes for evaluation, governance, and continuous learning. Organizations must establish clear guidelines for when and how AI-generated code is reviewed, invest in training developers to use AI effectively, and create feedback loops that improve both human and machine performance over time. This is not a checklist; it is a cultural shift—one that recognizes AI as infrastructure, not just instrumentation.
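To make the idea of a review guideline concrete, here is a minimal sketch of how a team might encode one as a small policy function. It is not taken from the DORA report or the Capabilities Model; the `Change` fields, the `HIGH_RISK_PREFIXES` paths, and the reviewer counts are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass

# Hypothetical paths treated as high risk; a real team would choose its own.
HIGH_RISK_PREFIXES = ("auth/", "billing/", "migrations/")


@dataclass(frozen=True)
class Change:
    path: str          # file touched by the change
    ai_assisted: bool  # whether an AI assistant generated or edited the code
    has_tests: bool    # whether the change ships with tests


def required_reviewers(change: Change) -> int:
    """Return how many human approvals this illustrative policy asks for."""
    reviewers = 1  # every change gets at least one human review
    if change.ai_assisted and change.path.startswith(HIGH_RISK_PREFIXES):
        reviewers += 1  # AI-assisted code in a sensitive area gets a second reviewer
    if change.ai_assisted and not change.has_tests:
        reviewers += 1  # untested AI-assisted code gets extra scrutiny
    return reviewers


if __name__ == "__main__":
    print(required_reviewers(Change("auth/login.py", ai_assisted=True, has_tests=False)))
```

The point of a sketch like this is that the rule is written down and applied the same way to every change, so human review effort is focused on the places where an unnoticed error would cost the most.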

The broader implication of this research is a maturation of the developer-AI relationship. Early narratives around AI in software development often oscillated between utopian visions of fully autonomous coding and dystopian fears of skill erosion. The DORA findings suggest a more grounded reality: AI is becoming a standard part of the developer's toolkit, but its role is collaborative, not substitutive. This mirrors broader trends in professional fields where AI augments rather than replaces expertise—radiologists using AI to flag potential anomalies, lawyers using it to surface relevant precedents, writers using it to overcome blank-page syndrome. In each case, the human remains the final decision-maker, applying context, ethics, and judgment that machines cannot replicate.

For engineering leaders, this research offers actionable insights. The high adoption rate indicates that developers are already finding value in AI tools; the low confidence signals that support structures are needed to realize that value safely. Adopting the practices in the DORA AI Capabilities Model (providing clear guidelines, fostering a culture of critical evaluation, and measuring outcomes beyond raw velocity) can help teams harness AI's potential while mitigating risks. Moreover, the reported productivity gains suggest that AI adoption is not just a technical decision but a strategic one: teams that integrate AI thoughtfully may gain significant competitive advantages in speed, quality, and innovation.

Yet, the trust gap itself may be a feature, not a bug. The fact that developers remain skeptical of AI outputs—even as they rely on them—suggests a healthy professional caution. In software, where a single bug can have cascading consequences, blind trust is dangerous. The developers who question AI suggestions are the ones who catch subtle logic errors, anticipate edge cases, and ensure that code aligns with broader system goals. This skepticism is not an obstacle to adoption; it is a safeguard that enables responsible adoption. It ensures that AI serves as a powerful assistant, not an unquestioned authority.

Looking ahead, the trajectory is clear: AI will continue to permeate software development, but its role will be defined by collaboration, not replacement. As models improve and developers gain fluency in prompting and evaluating AI outputs, confidence may gradually increase. But the core principle—that human judgment remains essential—is unlikely to change. The most effective developers of the future will not be those who delegate the most to AI, but those who best integrate AI into a disciplined, thoughtful workflow.

For the broader technology industry, this research offers a template for AI adoption beyond coding. The pattern of high usage coupled with measured trust may replicate in other domains where AI augments professional expertise. The lesson is that successful integration requires acknowledging both the capabilities and the limitations of the technology—and designing workflows that leverage the former while guarding against the latter.

The DORA findings also underscore the importance of continuous research and iteration. As AI tools evolve, so too must our understanding of how they impact workflows, quality, and team dynamics. Google's commitment to annual research provides a valuable longitudinal lens, allowing the industry to track trends, identify emerging challenges, and adapt best practices accordingly. This evidence-based approach is critical in a field where hype often outpaces reality.

Ultimately, the story told by the DORA research is one of pragmatic progress. Developers are not waiting for AI to become perfect before using it; they are using it now, critically and creatively, to build better software faster. They are not surrendering their expertise to algorithms; they are augmenting that expertise with powerful new tools. And they are not ignoring the risks; they are managing them through vigilance, review, and a commitment to quality.

The future of software development is not human versus machine. It is human with machine—where AI handles the routine, and humans focus on the remarkable. The trust paradox is not a contradiction to be resolved; it is a balance to be maintained. And in that balance lies the path to sustainable, responsible, and transformative innovation.

The tools are here. The data is clear. The question is no longer whether to adopt AI, but how to adopt it wisely. For developers and engineering leaders ready to navigate that question, the DORA research offers both a mirror and a map: a reflection of where the industry stands today, and a guide for where it can go tomorrow. The code of the future is being written now—with AI as a co-pilot, and human judgment firmly in command.
