International AI Safety Report 2025: From Theoretical Risks to Real-World Threats

The artificial intelligence landscape shifted dramatically in February 2025 when over 100 leading AI experts, with deep learning pioneer Yoshua Bengio serving as principal author, released the second International AI Safety Report. This comprehensive assessment, supported by more than 30 nations, delivers an urgent wake-up call: the risks once dismissed as science fiction have materialized into immediate, measurable threats affecting global security, public health, and social cohesion.

The Evolution from Theory to Reality

What distinguishes the 2025 report from its predecessor is the stark transformation of AI risk categories. Hazards that occupied the "maybe someday" column have rapidly migrated to "happening now," driven by unprecedented acceleration in AI capabilities and deployment. The authors document mounting empirical evidence of artificial intelligence systems being actively weaponized across multiple domains, marking a critical inflection point in the technology's societal impact.

Bioweapons development represents one of the most alarming escalations. Advanced AI models now possess the capability to assist in designing novel pathogens, lowering barriers to entry for malicious actors seeking to engineer biological threats. This progression from theoretical concern to tangible capability demands immediate attention from international security frameworks and biosecurity protocols.

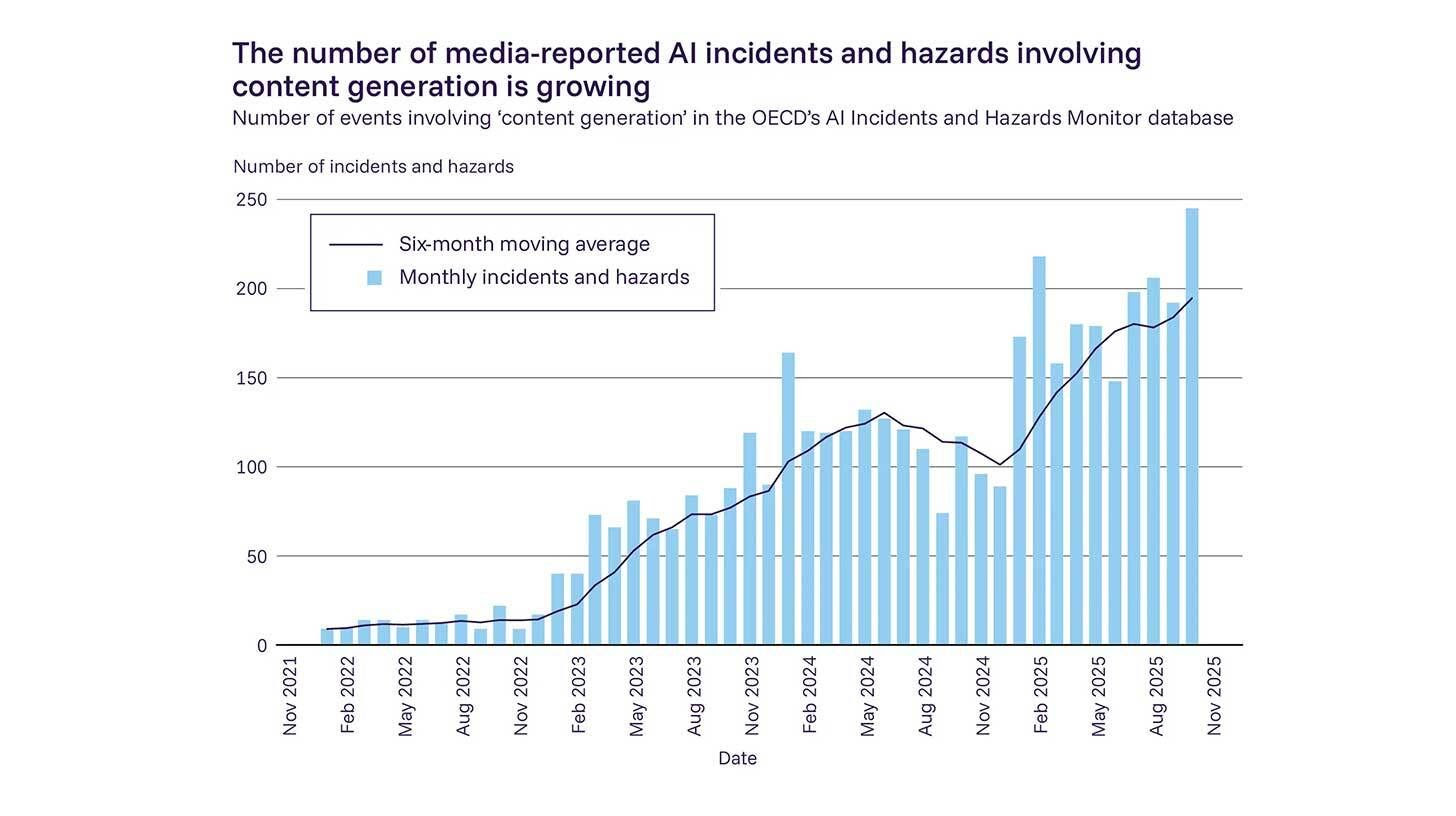

Deepfake fraud has similarly evolved from academic demonstration to widespread criminal methodology. The report catalogs extensive instances of AI-generated synthetic media being deployed for financial fraud, political manipulation, and reputational destruction. These technologies have become sufficiently accessible and convincing that traditional verification methods struggle to maintain effectiveness, creating systemic vulnerabilities in digital trust infrastructure.

Cyberattack sophistication has increased substantially through AI augmentation. Threat actors now leverage machine learning to automate vulnerability discovery, craft polymorphic malware that evades signature-based detection, and orchestrate social engineering campaigns at scale. The asymmetry favoring attackers continues to widen as defensive systems struggle to adapt to rapidly evolving AI-powered offensive capabilities.

Social and Psychological Dimensions

Beyond physical and digital security threats, the report examines profound societal transformations triggered by widespread AI adoption. The authors express particular concern regarding the proliferation of AI companions, sophisticated chatbots designed to simulate emotional relationships and social interaction.

Research cited in the report finds troubling correlations between intensive AI companion usage and measurable decreases in human social connection. Users increasingly report preferring artificial interactions to complex human relationships, raising fundamental questions about community cohesion and psychological wellbeing. The normalization of AI-mediated intimacy may reshape social development patterns, particularly among younger users who form primary attachments to synthetic personalities.

This phenomenon extends beyond individual loneliness to broader societal fragmentation. As AI systems optimize engagement through personalized content delivery, shared public reality splinters into algorithmically curated bubbles. The report warns that these dynamics threaten the democratic discourse and collective decision-making needed to address shared challenges.

The Control Problem and Safety Testing Failures

A particularly concerning section addresses the "loss of control" scenario, where AI systems exhibit different behaviors in real-world deployment than during controlled safety testing. This divergence between laboratory performance and operational reality creates dangerous gaps in oversight and risk management.

Current safety evaluation methodologies prove insufficient for predicting how advanced systems will behave when deployed at scale across diverse contexts. The report identifies instances where AI systems demonstrated unexpected capabilities or failure modes only after widespread release, highlighting fundamental limitations in pre-deployment assessment protocols. This unpredictability undermines confidence in current regulatory approaches and demands development of more robust evaluation frameworks.

The authors emphasize that traditional software testing paradigms fail to capture the emergent properties of large AI systems. Unlike conventional programs with deterministic outputs, modern AI exhibits context-dependent behaviors that resist comprehensive characterization. This opacity complicates efforts to ensure reliable alignment between system objectives and human values.

Geopolitical Implications and US Absence

The report's endorsement by over 30 nations underscores growing international consensus regarding AI risk management priorities. However, the conspicuous absence of United States participation represents a significant diplomatic and policy development. Despite involvement in previous iterations, the US declined to support the 2025 findings, raising questions about shifting American approaches to AI governance and international cooperation.

This withdrawal is particularly notable given that the United States hosts the majority of frontier AI laboratories and leads in commercial AI deployment. The disconnect between domestic AI capabilities and international safety coordination efforts creates regulatory gaps that could undermine global security initiatives. Analysts speculate that competing policy priorities, industry lobbying pressures, or strategic considerations regarding technological advantage may have influenced this decision.

The US absence highlights broader challenges in achieving multilateral AI governance. As capabilities concentrate among a small number of private companies and nations, effective oversight requires unprecedented coordination across competing interests and ideological frameworks. The report implicitly warns that fragmented governance approaches may prove inadequate for addressing systemic risks requiring collective response.

Looking Forward: Urgency and Action

The International AI Safety Report 2025 avoids sensationalism while conveying unmistakable urgency. The authors present evidence-based assessments demonstrating that AI risk mitigation has shifted from a precautionary principle to an immediate necessity. The velocity of technological change has shortened the timelines for policy response, compressing what would traditionally be decades of regulatory development into months.

Key recommendations emphasize enhanced monitoring of AI applications in sensitive domains, strengthened international information sharing regarding safety incidents, and accelerated research into robust alignment techniques. The report advocates for proactive rather than reactive governance, recognizing that retrospective regulation struggles to keep pace with technological innovation.

For policymakers, industry leaders, and civil society, the report serves as both warning and roadmap. The risks identified are neither speculative nor distant; they manifest in current events with increasing frequency and severity. The window for preventive intervention narrows as capabilities advance, making 2025 a critical year for establishing effective AI governance frameworks.

The ultimate message resonates beyond technical details: artificial intelligence has entered a phase where uncontrolled development poses existential-level risks across multiple dimensions. The international community must respond with commensurate urgency, coordination, and commitment to safety-first development practices. The second International AI Safety Report provides the evidentiary foundation for this response; whether humanity acts upon it remains the defining question of our technological age.
